
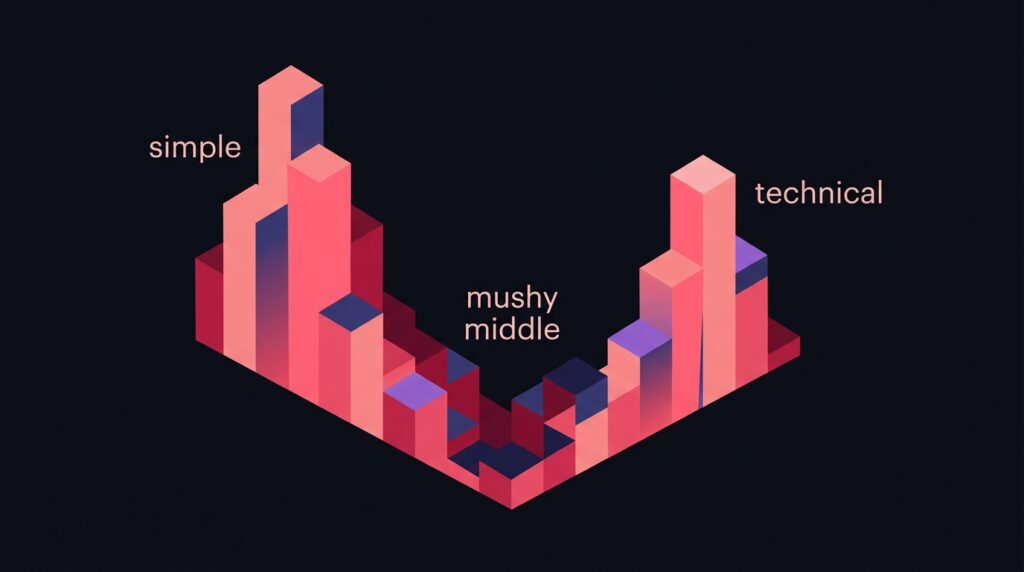

TLDR: Across 11,411 cited sentences scored with textstat, AI Mode readability splits into two peaks instead of a normal bell curve: 23.5% Very Easy (Flesch 90 to 100) and 21.3% Very Confusing (Flesch under 30). The middle ground (Fairly Difficult, Flesch 50 to 59) is the least cited tier at just 5.0%. The lesson: write either plain-language explainers or dense technical content. Corporate jargon, hedged language, and middle-of-the-road prose make up the worst-performing register in AI search.

The bimodal distribution

Our original analysis of 42,971 citations scored cited sentences on four readability measures: Flesch Reading Ease, Flesch-Kincaid Grade Level, Gunning Fog Index and Coleman-Liau Index. Headline numbers:

- Mean Flesch Reading Ease: 53.2

- Median Flesch Reading Ease: 62.8

- Standard deviation: 50.3 (huge)

- IQR: 32.6 to 83.3

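That standard deviation is the giveaway. The middle half of the data alone spans about 51 Flesch points (32.6 to 83.3), and the mean sits almost 10 points below the median. A single bell curve around one typical reading level would not look like that. The actual distribution has two clear peaks: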

- Very Easy (Flesch 90 to 100): 23.5% of citations

- Easy (80 to 89): 8.7%

- Fairly Easy (70 to 79): 6.6%

- Standard (60 to 69): 12.7%

- Fairly Difficult (50 to 59): 5.0% (the valley)

- Difficult (30 to 49): 22.1%

- Very Confusing (under 30): 21.3%
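
To reproduce this breakdown on your own scores, the tier boundaries above can be sketched as a small lookup. This is a minimal sketch, not the study's actual code: the names `flesch_tier` and `tier_shares` are hypothetical, and treating any score of 90 or above (including values over 100) as Very Easy is an assumption.

```python
from collections import Counter

# Lower bound of each tier, highest first, following the list above.
# Assumption: scores of 90 and above (even over 100) count as Very Easy.
TIERS = [
    (90.0, "Very Easy"),
    (80.0, "Easy"),
    (70.0, "Fairly Easy"),
    (60.0, "Standard"),
    (50.0, "Fairly Difficult"),
    (30.0, "Difficult"),
]


def flesch_tier(score):
    """Return the tier name for one Flesch Reading Ease score."""
    for lower, name in TIERS:
        if score >= lower:
            return name
    return "Very Confusing"  # everything under 30


def tier_shares(scores):
    """Return the percentage of scores in each tier, rounded to one decimal."""
    scores = list(scores)
    if not scores:
        return {}
    counts = Counter(flesch_tier(s) for s in scores)
    return {name: round(100 * n / len(scores), 1) for name, n in counts.items()}
```

Feeding `tier_shares` a list of per-sentence scores gives a table in the same shape as the one above, which makes a two-peak pattern easy to spot.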

Why the middle loses

Sentences in the Fairly Difficult bucket (Flesch 50 to 59) tend to share three characteristics:

- Corporate hedging language. ‘Solutions designed to optimise stakeholder outcomes through innovative methodologies.’ This reads professional but conveys nothing extractable.

- Mid-length complex sentences. 12 to 18 words with one main clause and one subordinate clause. Too long to be atomic, too short to convey real depth.

- Vague qualifier stacking. ‘Often, in many cases, businesses may find that…’ Multiple hedges in a row signal low confidence to the extraction algorithm.

Sentences like these rarely get cited. The valley between simple and technical is where most B2B blog content lives, which is why so many companies struggle to earn AI citations despite ranking organically.
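
Two of the three traits above (mid-length sentences and stacked qualifiers) are easy to flag mechanically. The following is a rough sketch under stated assumptions: the hedge list is an illustrative guess rather than a vetted lexicon, `sentence_flags` is a hypothetical helper, and "stacked" is taken to mean two or more hedges in one sentence.

```python
import re

# Illustrative hedge phrases only; extend or replace for your own content.
HEDGES = ("often", "in many cases", "may", "might", "generally",
          "typically", "sometimes", "perhaps")


def count_hedges(sentence):
    """Count whole-word occurrences of the hedge phrases in a sentence."""
    lowered = sentence.lower()
    return sum(
        len(re.findall(r"\b" + re.escape(hedge) + r"\b", lowered))
        for hedge in HEDGES
    )


def sentence_flags(sentence):
    """Flag the two mechanical traits: 12 to 18 words, and 2+ hedges."""
    words = len(sentence.split())
    hedges = count_hedges(sentence)
    return {
        "words": words,
        "hedges": hedges,
        "mid_length": 12 <= words <= 18,
        "stacked": hedges >= 2,  # assumption: two or more counts as stacking
    }
```

A sentence that trips both flags is a reasonable candidate for a rewrite, either shorter and plainer or longer and more specific.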

Match readability to query intent

The right register depends on the query. Health symptom queries pull from plain-language explainers (Healthline, Cleveland Clinic). Technical queries pull from specialist sources (PubMed, Stack Overflow, official docs). The same article cannot win both.

- Consumer-facing query (e.g. ‘what does metformin do’): target Flesch 80+. Short sentences, common words, direct definitions.

- Specialist query (e.g. ‘mechanism of action of metformin’): target Flesch under 40. Use precise technical vocabulary, named pathways, citation-rich prose.

- Buyer comparison query (e.g. ‘best CRM for SaaS’): target Flesch 60 to 70 with structured comparisons. This is the one place the middle works.
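
The three targets above can be written down as a simple lookup so that a draft's score is checked against the register its query calls for. This is a sketch only: the intent keys and the `matches_target` helper are hypothetical names, and treating "under 40" as a strict upper bound is an assumption.

```python
# Target Flesch Reading Ease ranges per query intent, from the list above.
# The intent keys are hypothetical labels, not part of any tool.
TARGETS = {
    "consumer": (80.0, float("inf")),        # 80+
    "specialist": (float("-inf"), 40.0),     # under 40 (upper bound exclusive)
    "buyer_comparison": (60.0, 70.0),        # 60 to 70
}


def matches_target(intent, score):
    """Return True if a Flesch score falls inside the target for the intent."""
    low, high = TARGETS[intent]
    if intent == "specialist":
        return score < high  # assumption: "under 40" excludes 40 itself
    return low <= score <= high
```

For example, `matches_target("consumer", 85.0)` passes, while a specialist piece scoring 55 does not.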

Audit your existing content for register

Run a textstat scan over your top 50 highest-traffic posts. Histogram the Flesch scores. If most of your content lands in the 50 to 65 range, you are sitting in the citation-poor middle and should split your editorial direction.

Practical action: classify each post as either ‘plain explainer’ (target 80+) or ‘technical depth’ (target under 40) based on the query intent it serves. Then rewrite the opening three paragraphs to match the target register, since they form the highest-probability extraction zone (see the top-35% rule).
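
The audit described above might look something like the sketch below. It is not a finished tool: `audit_register` and `text_histogram` are hypothetical helpers, the posts are assumed to be available as a title-to-body dictionary, and the scoring function is passed in so that `textstat.flesch_reading_ease` (or any other scorer) can be plugged in.

```python
from collections import Counter


def audit_register(posts, score_fn, low=50.0, high=65.0):
    """Score each post and list the titles landing in the low..high range.

    posts: dict mapping post title to body text (assumed input shape).
    score_fn: callable taking text and returning a Flesch Reading Ease score.
    """
    scores = {title: score_fn(body) for title, body in posts.items()}
    in_middle = sorted(t for t, s in scores.items() if low <= s <= high)
    share = len(in_middle) / len(scores) if scores else 0.0
    return scores, in_middle, share


def text_histogram(scores, width=10):
    """Render a plain-text histogram in 10-point bins from 0 to 99.

    Scores below 0 are placed in the first bin and scores of 100 or
    more in the last bin, since Flesch scores can fall outside 0..100.
    """
    bins = Counter()
    for s in scores:
        clamped = max(0.0, min(s, 99.999))
        bins[int(clamped // width) * width] += 1
    return "\n".join(
        f"{lower:>3}-{lower + width - 1:<3} {'#' * bins[lower]}"
        for lower in range(0, 100, width)
    )


# Usage with textstat (requires: pip install textstat):
#   import textstat
#   scores, in_middle, share = audit_register(posts, textstat.flesch_reading_ease)
#   print(text_histogram(scores.values()))
```

If `share` comes back above one half, most of the sampled posts sit in the 50 to 65 band and are candidates for reclassifying into one of the two target registers.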

The grade-level data tells the same story

Flesch-Kincaid grade levels show identical bimodality:

- Elementary (4th grade or below): 38.0%

- Middle School (5 to 6): 12.8%

- Junior High (7 to 8): 12.3%

- High School (9 to 10): 13.8%

- Senior High (11 to 12): 1.9% (the valley)

- College+ (13+): 21.1%

The Senior High register is the least-cited bucket at 1.9%. This is the reading level of typical corporate marketing copy: too long for plain English, not technical enough for specialists. Avoid it.
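
To see how sentence length and word length drive both metrics, here is a sketch using the standard published Flesch Reading Ease and Flesch-Kincaid Grade Level formulas. The function names and the `grade_bucket` cut-offs for fractional grades are assumptions (for example, a grade of 10.9 is placed in High School), and textstat counts syllables in its own way, so its numbers may differ slightly.

```python
def flesch_reading_ease(words, sentences, syllables):
    """Standard Flesch Reading Ease formula from raw counts."""
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)


def flesch_kincaid_grade(words, sentences, syllables):
    """Standard Flesch-Kincaid Grade Level formula from raw counts."""
    return 0.39 * (words / sentences) + 11.8 * (syllables / words) - 15.59


# Lower bound of each bucket, highest first, following the list above.
# Assumption: fractional grades fall into the bucket of their whole part.
GRADE_BUCKETS = [
    (13.0, "College+"),
    (11.0, "Senior High"),
    (9.0, "High School"),
    (7.0, "Junior High"),
    (5.0, "Middle School"),
]


def grade_bucket(grade):
    """Map a Flesch-Kincaid grade to one of the six buckets."""
    for lower, name in GRADE_BUCKETS:
        if grade >= lower:
            return name
    return "Elementary"  # below 5th grade
```

A 100-word passage with 5 sentences and 150 syllables works out to a Reading Ease of about 59.6 and a grade of about 9.9. Both formulas use the same two ratios, words per sentence and syllables per word, which is why the grade-level data mirrors the Flesch data.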

Frequently Asked Questions

Should I just write at a 4th-grade level for everything?

How do I measure Flesch scores at scale?

textstat.flesch_reading_ease(text). Install with pip install textstat. Run it over your post bodies in batch and histogram the results.

Does this apply to ChatGPT and Perplexity?

Is there a register that works across all query types?
