AI Summary

This piece covers how Perplexity selects sources and how fast it is growing. Perplexity is growing 20% month-over-month, with a stated target of 1 billion queries per week, and its users are research-oriented and high-intent. Getting cited there is one of the highest-leverage GEO bets you can make in 2026.

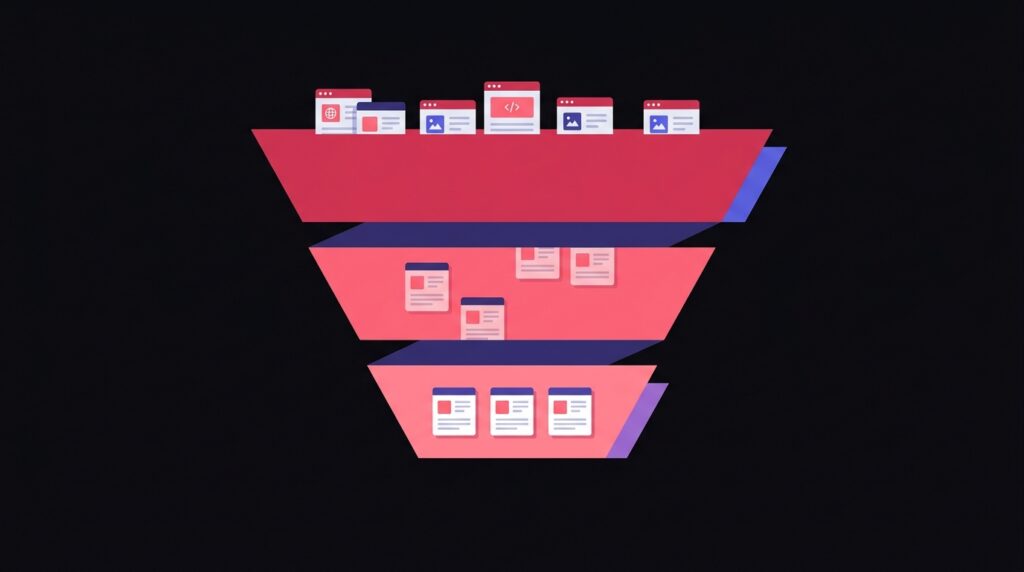

Perplexity’s three-layer reranking, explained

Perplexity’s public technical disclosures, together with reverse-engineering by independent researchers, describe a three-stage pipeline:

- Retrieval. A blend of Bing, Google, and Perplexity’s own crawler returns ~100-300 candidate URLs per query.

- Passage embedding. The page behind each candidate URL is split into passages (chunked), and each passage is embedded. Cosine similarity between a passage embedding and the query embedding produces a first-pass relevance score.

- ML reranking. A learned reranker reorders candidates using freshness, source authority, citation diversity, and a structural-quality signal that disproportionately favors content with clear headings, lists, and schema.
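The three stages above can be sketched as a toy Python model to make the scoring flow concrete. This is not Perplexity's code, and several parts are assumptions: the bag-of-words `embed` function stands in for a neural encoder, stage 1 retrieval is replaced by a hand-built candidate list, citation diversity is not modeled, and the `Candidate` fields, the 180-day freshness half-life, and the `WEIGHTS` values are hypothetical placeholders for what a learned reranker would estimate.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass
class Candidate:
    url: str
    text: str
    published: date
    authority: float  # hypothetical 0..1 source-authority score
    structure: float  # hypothetical 0..1 score for headings, lists, schema


def chunk(text: str, size: int = 50) -> list[str]:
    """Split page text into passages of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)] or [""]


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase term counts (stand-in for a neural encoder)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def freshness(published: date, today: date, half_life_days: float = 180.0) -> float:
    """Exponential decay: 1.0 on the publish day, 0.5 after one half-life."""
    age = (today - published).days
    return 0.5 ** (max(age, 0) / half_life_days)


# Hypothetical fixed weights standing in for a learned reranker.
WEIGHTS = {"relevance": 0.5, "freshness": 0.2, "authority": 0.2, "structure": 0.1}


def rank(query: str, candidates: list[Candidate], today: date) -> list[tuple[str, float]]:
    q = embed(query)
    scored = []
    for c in candidates:
        # Stage 2: the best-matching passage sets the first-pass relevance score.
        relevance = max(cosine(q, embed(p)) for p in chunk(c.text))
        # Stage 3: rerank by blending relevance with the extra signals.
        score = (WEIGHTS["relevance"] * relevance
                 + WEIGHTS["freshness"] * freshness(c.published, today)
                 + WEIGHTS["authority"] * c.authority
                 + WEIGHTS["structure"] * c.structure)
        scored.append((c.url, score))
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Usage with made-up candidates (stage 1 output is assumed, not fetched).
today = date(2026, 1, 15)
docs = [
    Candidate("https://example.com/fresh-guide",
              "Perplexity citation guide: freshness, schema, and structure.",
              date(2026, 1, 1), 0.6, 0.9),
    Candidate("https://example.org/old-post",
              "Perplexity citation guide from an older crawl.",
              date(2023, 6, 1), 0.6, 0.3),
]
for url, score in rank("perplexity citation freshness", docs, today):
    print(f"{score:.3f}  {url}")
```

Replacing `embed` with a real sentence-embedding model, or the fixed weights with a trained model, would keep the same shape: score passages, keep the best match per URL, then reorder on additional signals.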

Five tactical priorities for Perplexity citation

- Earned media on Tier-1 publications. Perplexity’s reranker structurally favors mentions on outlets with strong domain authority. A single quote in a Tier-1 article often outweighs months of self-published content.

- Freshness signals. Surface the published and updated dates in the HTML, in schema markup, and in a visible byline. Perplexity actively penalizes stale content for time-sensitive queries.

- Passage-level structure. Each H2 section should stand alone as a complete thought, because passages are scored individually. Add a TL;DR at the top of every long article.

- Numerical claims with sources. Perplexity’s reranker rewards verifiable data. Inline citations to .gov, .edu, peer-reviewed studies, and Tier-1 publications increase passage scores.

- Brand entity strength. Make sure your Wikipedia entry (if you have one), Crunchbase profile, LinkedIn page, and About page all describe the brand consistently with the same entity attributes.
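For the freshness and entity-consistency priorities, one way to keep dates and brand attributes in sync is to generate the visible byline and the schema.org JSON-LD from the same values. The sketch below uses the schema.org `Article` type with `datePublished` and `dateModified`, plus a publisher `Organization` whose `sameAs` links point at the brand's profiles. The helper names, brand name, author, and URLs are hypothetical placeholders, and nothing here is a documented Perplexity requirement.

```python
import json
from datetime import date


def byline_html(author: str, published: date, modified: date) -> str:
    """Visible byline with machine-readable <time> elements."""
    def fmt(d: date) -> str:
        return f"{d:%B} {d.day}, {d.year}"
    return (
        f'<p class="byline">By {author} | Published '
        f'<time datetime="{published.isoformat()}">{fmt(published)}</time> | Updated '
        f'<time datetime="{modified.isoformat()}">{fmt(modified)}</time></p>'
    )


def article_jsonld(headline: str, url: str, author: str, published: date,
                   modified: date, org_name: str, org_url: str,
                   same_as: list[str]) -> str:
    """schema.org Article JSON-LD built from the same inputs as the byline."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        "author": {"@type": "Person", "name": author},
        "publisher": {
            "@type": "Organization",
            "name": org_name,
            "url": org_url,
            "sameAs": same_as,  # the same entity across Wikipedia, Crunchbase, LinkedIn
        },
    }
    return f'<script type="application/ld+json">\n{json.dumps(data, indent=2)}\n</script>'


# Hypothetical values; reuse one set of constants everywhere the page renders them.
PUBLISHED, MODIFIED = date(2026, 1, 5), date(2026, 1, 20)
SAME_AS = [
    "https://en.wikipedia.org/wiki/Example_Co",
    "https://www.crunchbase.com/organization/example-co",
    "https://www.linkedin.com/company/example-co",
]
print(byline_html("Jane Doe", PUBLISHED, MODIFIED))
print(article_jsonld("How Perplexity picks sources", "https://example.com/perplexity-guide",
                     "Jane Doe", PUBLISHED, MODIFIED, "Example Co",
                     "https://example.com", SAME_AS))
```

Driving both outputs from one set of constants means the visible dates and the schema dates cannot drift apart when an article is updated.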

What doesn’t work on Perplexity

- Keyword stuffing or generic AI-generated filler. Perplexity’s reranker is calibrated to penalize low-information density.

- Pure backlink quantity. The reranker weights link quality and entity recognition, not raw counts.

- Hidden ‘LLM-only’ content. Perplexity crawls and ranks the same content humans see.

- llms.txt files. Perplexity has not announced support for llms.txt, and adoption studies show no measurable citation lift.

Frequently Asked Questions

How do I check if I'm cited in Perplexity?

Is Perplexity Pro worth it for SEO research?

Does Perplexity favor recent content over evergreen content?

Want this implemented for your brand?

I help growth-stage companies own their category in AI search. Book a strategy call.