AI Summary

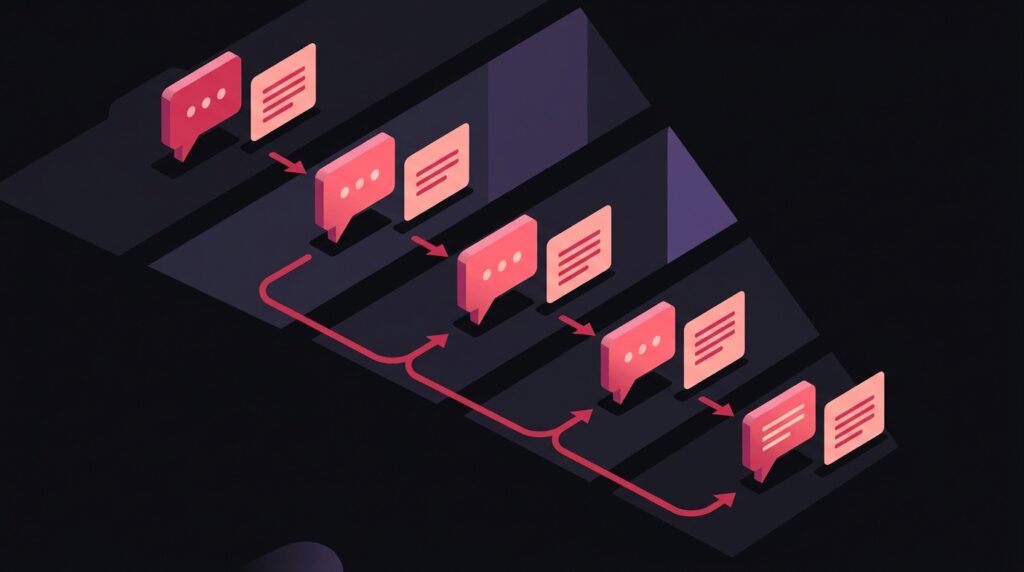

TL;DR: B2B buyers in 2026 run an average of 11 AI search queries before booking a demo, up from 4 in 2024. The buyer journey now happens largely inside ChatGPT, Copilot, Perplexity, and Claude. If you only show up at the consideration stage, you have likely already lost the deal. Here’s the content map for showing up at every stage.

How AI changed the B2B buyer journey

Recent industry research suggests the average B2B buyer now runs 11 AI search queries before requesting a demo, up from 4 in 2024. The journey has shifted from Google searches and content marketing to AI conversations that end in a vendor shortlist. By the time a buyer fills in your demo form, the AI has already shaped their shortlist.

The implication: brand presence in AI answers across the entire journey, not just at the bottom of the funnel, is now a deciding factor in B2B win rates.

The 5 buyer journey stages and what AI queries each generates

- Problem awareness. ‘Why is my [metric] dropping?’ ‘What causes [problem]?’ Educational queries that frame the problem space.

- Solution exploration. ‘How do companies solve [problem]?’ ‘What approaches exist for [challenge]?’ Category-level queries.

- Vendor discovery. ‘Best tools for [category].’ ‘Top vendors in [space].’ Listicle-shaped retrieval.

- Comparison and shortlisting. ‘X vs Y comparison.’ ‘Alternatives to [incumbent].’ Specific vendor queries.

- Validation. ‘Reviews of [vendor].’ ‘Is [vendor] good for [use case]?’ Trust-confirming queries.

Most B2B brands have content for stages 3 and 4. The advantage is in covering 1, 2, and 5 with equal depth.

The stage-by-stage content map

Stage 1: Problem awareness content

Educational pillar pages on the symptoms and causes of the problems your product solves. Format: ‘why is X happening’ or ‘what causes Y’. Length: 2,000+ words. Citation goal: become the explainer.

Stage 2: Solution exploration content

Framework articles on approaches to solving the problem. Format: ‘how to solve X’ or ‘frameworks for Y’. Mention solution categories without product pitching. Citation goal: own the solution vocabulary.

Stage 3: Vendor discovery content

Pursue inclusion in third-party ‘best of’ listicles via outreach, product samples, and free trials. On your own site, build comparison content covering your category fairly. Citation goal: appear in every vendor list.

Stage 4: Comparison content

‘You vs each competitor’ pages, written fairly with honest tradeoffs. AI engines cite balanced comparisons; one-sided pages get filtered. Citation goal: own the head-to-head queries.

Stage 5: Validation content

Customer case studies, third-party reviews, and ratings on G2, Capterra, and TrustRadius. Encourage discussion on Reddit and LinkedIn. Citation goal: when buyers verify, AI surfaces strong evidence.

Measuring AI presence across the journey

Run your top 50 buyer queries (10 per stage) through ChatGPT, Copilot, Perplexity, and Claude every month. Score each answer as cited, mentioned, or absent. Look for the missing stages first.

Use the GEO/AEO Tracker to automate this monitoring. Most B2B brands discover large gaps at stages 1 and 5, where competitors who invested early are already entrenched in AI answers.
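The monthly audit can be reduced to a small scoring script. This is a minimal sketch that is independent of any tracking tool. It assumes you have already collected each answer and labeled it cited, mentioned, or absent. The 2/1/0 weights are an illustrative assumption, as are the sample queries and the vendor name "ExampleCo".

```python
from collections import defaultdict

# Illustrative weights (an assumption, not a standard): a citation counts
# more than a bare brand mention, and absence counts as zero.
SCORES = {"cited": 2, "mentioned": 1, "absent": 0}
MAX_SCORE = max(SCORES.values())


def stage_coverage(results):
    """Return a 0..1 coverage score per journey stage.

    `results` is a list of (stage, engine, query, outcome) tuples, where
    outcome is one of the keys in SCORES.
    """
    totals = defaultdict(int)
    counts = defaultdict(int)
    for stage, _engine, _query, outcome in results:
        totals[stage] += SCORES[outcome]
        counts[stage] += 1
    return {stage: totals[stage] / (MAX_SCORE * counts[stage]) for stage in totals}


def weakest_stages(results, n=2):
    """List the n stages with the lowest coverage, worst first."""
    coverage = stage_coverage(results)
    return sorted(coverage, key=coverage.get)[:n]


# Hypothetical audit rows; "ExampleCo" stands in for your brand.
results = [
    ("Problem awareness", "ChatGPT", "why is my pipeline conversion dropping", "absent"),
    ("Problem awareness", "Perplexity", "what causes slow sales cycles", "absent"),
    ("Vendor discovery", "ChatGPT", "best revenue intelligence tools", "cited"),
    ("Vendor discovery", "Claude", "top vendors in revenue intelligence", "mentioned"),
    ("Validation", "Copilot", "is ExampleCo good for mid-market", "mentioned"),
    ("Validation", "Perplexity", "reviews of ExampleCo", "absent"),
]
print(weakest_stages(results))  # → ['Problem awareness', 'Validation']
```

With all 50 queries and four engines, the two lowest-scoring stages are where to invest first.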

The AI engines B2B buyers actually use (and why they behave differently)

The mental model of “AI search” as one channel breaks down fast in B2B. Buyers do not run identical queries through every model. They pick the engine that matches the question, and each engine pulls from a different mix of sources. Understanding that split is the first step to showing up in the right place at the right stage of the journey.

ChatGPT is the dominant front door for top-of-funnel research. Ahrefs surveyed 879 marketers and found that 44% of respondents reported using ChatGPT, followed by Gemini at 15% and Claude at 10%. That survey covers marketers rather than buyers, but a similar tilt is likely on the buyer side. When a B2B operator opens a chat to ask “why is my pipeline conversion dropping,” they most often start in ChatGPT. The model draws on its training corpus plus live web retrieval. As a result, citation depends on long-lived, high-authority sources that have been crawled and re-cited many times.

Perplexity behaves more like a research librarian. Buyers reach for it when they want sourced, footnoted answers they can quote in an internal Slack thread or a board update. The engine surfaces fresh content faster than ChatGPT and weights authoritative news, vendor docs, and structured data heavily. For B2B, that means published case studies, customer logos, and pricing pages get pulled in often.

Google AI Overviews, the third surface, sit on top of the classic SERP and pull from results that already rank. Ahrefs found that 76% of AI Overview citations come from URLs already in the top 10, which means classic SEO ranking still gates AIO presence.

- ChatGPT: Best optimized via Reddit, YouTube transcripts, Wikipedia, and high-DR blog posts. Long-lived content compounds.

- Perplexity: Best optimized via fresh authoritative content, structured FAQs, vendor case studies, and clear citations on your own pages.

- Google AI Overviews: Best optimized via classic SEO. If you are not in the top 10, you are unlikely to be cited.

- Claude: Often used inside enterprise workflows. Picks up on policy docs, regulatory text, and detailed product documentation.
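One way to act on the “structured FAQs” point is schema.org `FAQPage` markup, embedded as JSON-LD in a `<script type="application/ld+json">` tag. The sketch below generates that markup from question/answer pairs. The source does not establish whether any particular AI engine reads this markup; treat that as an assumption. The sample question and answer are hypothetical placeholders.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical FAQ entry. Paste the output inside
# <script type="application/ld+json"> ... </script> on the page.
print(faq_jsonld([
    ("What causes pipeline conversion to drop?",
     "Common causes include slower follow-up, weaker lead fit, and pricing friction."),
]))
```

The markup should mirror questions and answers that are also visible on the page, so the on-page FAQ and the structured version stay in sync.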

Citation share is your new top-of-funnel KPI

Pipeline reporting in 2026 is broken if it still leans on “sessions from organic search” as the leading indicator of awareness. Buyers no longer click through to read your blog before they shortlist you. They ask an AI, hear or do not hear your brand mentioned, and form an opinion before any UTM ever fires. Citation share, the percentage of category-relevant prompts in which your brand appears, is the better leading indicator. It tends to move four to six weeks ahead of demo volume.
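Written as a formula, citation share is a plain ratio. This version assumes each prompt in your set P is run on each engine in your set E, and that every prompt-engine pair counts as one answer. If you count per prompt instead, adjust the denominator.

```latex
\text{citation share} =
\frac{\left|\{(p, e) \in P \times E : \text{brand appears in the answer to } p \text{ on } e\}\right|}
     {|P|\,|E|} \times 100\%
```

For example, take the 50 buyer queries from the monthly audit (|P| = 50) run on four engines (|E| = 4). That produces 200 answers. If your brand appears in 46 of them (a hypothetical count), citation share is 46 / 200 = 23%.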

The scale of the click loss is what makes this shift urgent. Ahrefs analyzed 300,000 keywords and reported that AI Overviews reduced clicks to the top-ranking page by 34.5% when an AIO was present. That is not a minor headwind. For a B2B brand whose awareness funnel was tuned to organic clicks, up to a third of top-ranking clicks on AIO-affected queries can disappear without a corresponding drop in actual buyer interest. The interest moved upstream into the chat, which is exactly why citation share has to replace sessions as the awareness KPI.
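You can size the exposure for your own site with one line of arithmetic. This assumes the Ahrefs 34.5% figure transfers to your keywords and applies only to queries that actually show an AI Overview. Here C_top is monthly clicks to your top-ranking pages, and f_AIO is the fraction of those clicks that come from AIO-triggering queries. The values below are hypothetical.

```latex
\Delta C \approx 0.345 \cdot f_{\text{AIO}} \cdot C_{\text{top}}
= 0.345 \times 0.4 \times 10{,}000
= 1{,}380 \text{ clicks} \quad (13.8\% \text{ of } C_{\text{top}})
```

The loss approaches the full 34.5% only if every one of those queries shows an AI Overview.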

The AI traffic that does land on your site behaves differently too. Ahrefs reported that over 80% of its own AI search referral traffic goes to just three page types (free tools, product pages, and the homepage), not blog posts. Translated to B2B, that suggests your category landing pages and free utilities may be doing more demand capture than long-form thought leadership. If you are spending 80% of your content budget on blog posts and 20% on product and tool pages, that ratio is likely upside down for AI search. Track which of your pages already pull citations using the GEO/AEO Tracker and rebalance toward whatever is actually getting mentioned.
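To check whether your own AI referral traffic follows the same pattern, you can bucket sessions by referrer and landing-page type. This sketch rests on two assumptions. First, the hostnames below are a starting list associated with these assistants; confirm them against your analytics, since many AI-originated clicks arrive with no referrer at all. Second, the path-prefix mapping to page types is hypothetical and site-specific.

```python
from collections import Counter
from urllib.parse import urlparse

# Starting list of AI assistant referrer hostnames (an assumption; verify
# against what actually appears in your analytics).
AI_REFERRERS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}

# Hypothetical, site-specific mapping from URL path prefix to page type.
PAGE_TYPES = [("/tools/", "free tool"), ("/product", "product page"), ("/blog/", "blog post")]


def page_type(path):
    """Classify a landing path; the bare root is the homepage."""
    if path in ("", "/"):
        return "homepage"
    for prefix, label in PAGE_TYPES:
        if path.startswith(prefix):
            return label
    return "other"


def ai_landing_mix(sessions):
    """Share of AI-referred sessions by landing-page type.

    `sessions` is an iterable of (referrer_url, landing_url) pairs.
    Sessions from non-AI referrers are ignored.
    """
    counts = Counter()
    for referrer, landing in sessions:
        host = urlparse(referrer).hostname or ""
        if host in AI_REFERRERS:
            counts[page_type(urlparse(landing).path)] += 1
    total = sum(counts.values())
    return {kind: n / total for kind, n in counts.items()} if total else {}


sessions = [
    ("https://chatgpt.com/", "https://example.com/"),
    ("https://www.perplexity.ai/search", "https://example.com/tools/roi-calculator"),
    ("https://www.google.com/", "https://example.com/blog/ai-search"),
    ("https://claude.ai/", "https://example.com/blog/ai-search"),
]
print(ai_landing_mix(sessions))
```

Comparing this mix with how your content budget is split is a quick way to spot the upside-down ratio described above.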

Optimizing each journey stage for AI extraction

The five-stage map gives you the surface area. The harder question is how to format each piece so AI engines can extract and attribute it cleanly. The pattern that wins is writing for retrieval first, narrative second. Every section needs a self-contained answer, a clear noun-verb claim, and a source the model can attribute to your brand.

- Problem awareness pages: Open with a one-sentence definition of the symptom and a one-sentence cause. Models cite definitions verbatim. Bury your definition in the third paragraph and you lose the citation to whoever put it first.

- Solution exploration pages: Use ordered lists of approaches. AI engines prefer enumerated structures because they are easier to extract as ranked options. Each list item should be a complete claim, not a teaser.

- Vendor discovery pages: Build category landing pages with a clean H1 like “Best [category] tools for [ICP] in 2026” and include a comparison table. Engines pull table rows directly into responses. Make sure your own brand appears in the list with neutral language, not just competitors.

- Comparison pages: Format as side-by-side tables with named tradeoffs. Include the competitor by name in the page title. Models cite balanced comparisons; one-sided pages get filtered out as marketing.

- Validation pages: Surface specific customer outcomes with named companies and numbers. “Acme cut churn by 22% in six months” is citable. “Customers love us” is not.
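Some of these formatting rules are mechanical enough to check before publishing. The following is a rough heuristic sketch, not a model of how any AI engine extracts text. It flags three things: validation claims missing a named customer or a number, comparison titles that omit the competitor, and problem pages whose opening sentence runs long. The 30-word threshold and the names "Acme", "ExampleCo", and "Rival" are illustrative assumptions.

```python
import re


def lint_claim(claim, customers):
    """Flag a validation claim that lacks a named customer or a concrete number."""
    problems = []
    if not any(name in claim for name in customers):
        problems.append("no named customer")
    if not re.search(r"\d", claim):
        problems.append("no concrete number")
    return problems


def lint_comparison_title(title, competitor):
    """Comparison pages should name the competitor in the title (case-insensitive)."""
    return [] if competitor.lower() in title.lower() else ["title omits competitor name"]


def lint_opening(body, max_words=30):
    """Problem-awareness pages should open with a short, quotable definition."""
    first_sentence = re.split(r"(?<=[.!?])\s+", body.strip(), maxsplit=1)[0]
    if len(first_sentence.split()) > max_words:
        return [f"opening sentence longer than {max_words} words"]
    return []


print(lint_claim("Acme cut churn by 22% in six months", {"Acme"}))  # → []
print(lint_claim("Customers love us", {"Acme"}))  # → ['no named customer', 'no concrete number']
print(lint_comparison_title("ExampleCo vs Rival: honest tradeoffs", "Rival"))  # → []
```

A check like this catches only the obvious misses. Whether a page is balanced and genuinely useful still needs a human read.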

One often-overlooked tactic: refresh your highest-traffic pages every quarter with the current year in the title and at least one new statistic. Ahrefs research shows 87% of marketers now use AI to help create content, and the median publisher using AI ships 17 articles per month versus 12 without. When everyone can produce volume, volume stops differentiating; freshness is what still separates pages. AI engines weight recency for time-sensitive queries, so a 2024 article competing against a 2026 update usually loses the citation.
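A quarterly refresh is easier to sustain with a queue. This sketch assumes you can export each page's URL, title, last-updated date, and monthly visits from your CMS or analytics. The 92-day threshold (roughly one quarter) and the sample pages are hypothetical.

```python
import re
from dataclasses import dataclass
from datetime import date


@dataclass
class PageRecord:
    url: str
    title: str
    last_updated: date
    monthly_visits: int


def needs_refresh(page, today, max_age_days=92):
    """True if the page is older than about a quarter or its title names a past year."""
    too_old = (today - page.last_updated).days > max_age_days
    title_years = [int(y) for y in re.findall(r"\b(20\d{2})\b", page.title)]
    stale_year = any(year < today.year for year in title_years)
    return too_old or stale_year


def refresh_queue(pages, today, top_n=10):
    """Pages that need a refresh, highest traffic first."""
    due = [p for p in pages if needs_refresh(p, today)]
    return sorted(due, key=lambda p: p.monthly_visits, reverse=True)[:top_n]


pages = [
    PageRecord("/best-crm-tools", "Best CRM tools for SaaS in 2024", date(2026, 4, 1), 900),
    PageRecord("/crm-vs-rival", "ExampleCo vs Rival (2026)", date(2026, 4, 15), 500),
    PageRecord("/what-causes-churn", "What causes churn?", date(2025, 12, 1), 1200),
]
print([p.url for p in refresh_queue(pages, today=date(2026, 5, 1))])
# → ['/what-causes-churn', '/best-crm-tools']
```

Sorting by traffic puts the pages with the most citation potential at the front of each quarter's work.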

Dark pipeline: why AI-influenced deals break attribution

The biggest reporting headache in 2026 is that AI-influenced deals look like direct traffic in your CRM. A buyer asks ChatGPT for a vendor recommendation, hears your brand, types your URL into the address bar a day later, and books a demo. Your last-touch attribution credits “direct” or “branded search,” not the AI conversation that actually drove the consideration. The pipeline shows up; the cause does not.

This is the same dark social problem B2B marketers have wrestled with for a decade, except now it is bigger and more centralized. Three patterns help you measure it without waiting for perfect attribution tools. First, add a single self-reported question to your demo form, “Where did you first hear about us?”, with AI engines listed explicitly as options. Second, monitor branded search volume for unusual spikes that correlate with AI citation appearances; the lag is typically two to seven days. Third, run regular citation audits across ChatGPT, Copilot, Perplexity, and Claude, and overlay the citation count against pipeline velocity in your category.

- Self-reported source on demo form: Ugly but useful. It may add around 10% form abandonment, but it recovers attribution that last-touch models miss entirely, even if self-reported answers are imperfect.

- Branded search lag analysis: Track daily branded search volume against AI citation events. Citation appearances usually precede branded search lifts by two to seven days, a lag that weekly data is too coarse to show.

- Direct traffic to specific deep links: If your /pricing or /comparison pages get unexpected direct hits, those are likely AI-referred buyers who pasted in the URL the model gave them.

- Sales call recordings: Tag every call where the buyer mentions ChatGPT, Perplexity, Claude, or “my AI told me.” Quarterly review reveals which prompts are driving deals.

The compounding effect is real and underestimated. ProductLed reported that Cursor reached roughly $100M ARR in about 12 months and Lovable in about 8 months, both with minimal traditional marketing spend and heavy reliance on AI-driven word of mouth. Their pipeline never showed clean attribution because most of the buyer education happened in chats and Discord threads. The teams that won did not solve the attribution problem; they accepted it and tracked leading indicators of category presence instead.

What sales teams need to know about AI-prepped buyers

The buyer who books a demo in 2026 is materially different from the 2023 buyer. They have already heard your value prop summarized by an AI, compared you to two or three competitors in a chat, and formed an opinion about your pricing posture before the first call. Sales playbooks built for cold education calls do not work on this audience. The buyer arrives at minute zero already at minute thirty of the old script.

Three sales motions need to change. Discovery questions should start with what the buyer already knows, not from zero. “What did you research before this call?” surfaces both the ChatGPT framing and any competitor comparisons the AI surfaced. If the AI told them something inaccurate about your pricing or features, you need to correct it in the first ten minutes, before the inaccuracy becomes a deal-killer. Demos should compress the standard product walkthrough by half and spend the saved time on the buyer’s specific use case.

- Open with knowledge audit: “What have you already researched about us?” Two minutes of listening tells you exactly which AI narrative you are working with or against.

- Pre-correct AI hallucinations: Maintain a living doc of common AI misinformation about your product (wrong pricing tiers, missing features, outdated integrations). Reps should know the top five and address them upfront.

- Compress demo, expand consultation: Buyers no longer need a 30-minute product tour. Run a 10-minute targeted demo and 20 minutes on their specific workflow.

- Provide AI-citable post-call assets: Send a short, structured recap with named outcomes the buyer can paste back into their AI for further analysis. The buyer will use it. Make sure the framing is yours.

The win rate gap between teams that adapted and teams that did not keeps widening. Sales leaders who treat the AI-prepped buyer as a new persona, not a sharper version of the old one, tend to close more deals. The pattern repeats across SaaS categories. Discovery time drops. Technical objections drop because the AI already filtered them. Procurement timelines shorten because the buyer arrived with internal alignment already built. Teams still running 2023 playbooks are losing to teams that retooled in the last 18 months.

Frequently Asked Questions

Should we still gate content for lead capture?

How much does AI search reduce demo request volume?

Which AI engine matters most for B2B?

Want this implemented for your brand?

I help growth-stage companies own their category in AI search. Map your B2B AI presence.