
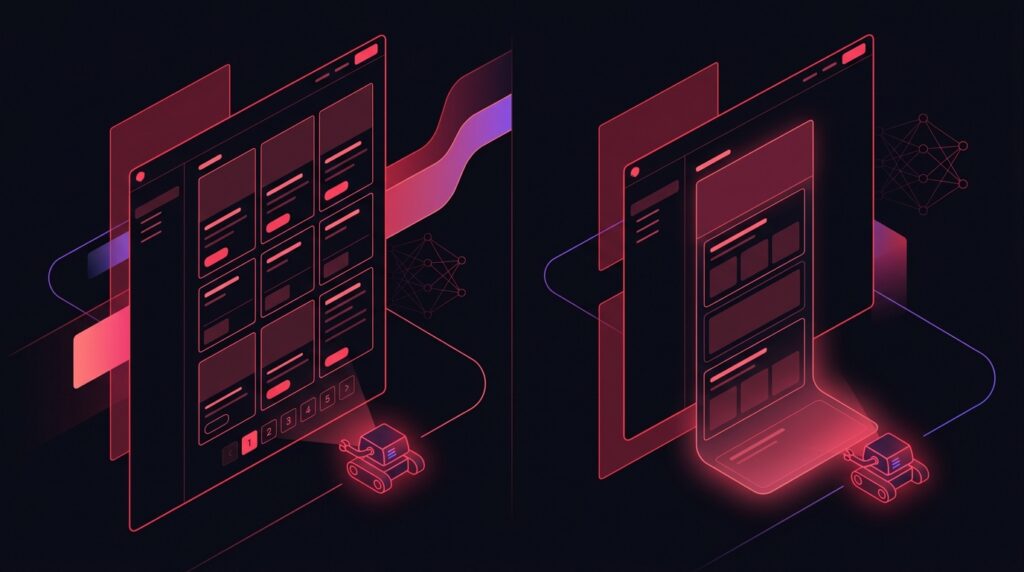

TLDR: Infinite scroll hides 60 to 90% of your archive content from AI crawlers because most crawlers do not scroll, do not trigger lazy-load events, and do not execute the JavaScript that fetches the next batch. Pagination with proper rel=next and rel=prev semantics (or just clean numbered URLs) gives every page a discoverable URL and ensures full crawl coverage. The right pattern in 2026: paginated archives for AI crawlers, optional infinite scroll layered on top for users who prefer it (progressive enhancement).

Why infinite scroll fails AI crawlers

Infinite scroll relies on user interaction (scrolling) plus JavaScript (typically an IntersectionObserver callback that triggers a fetch). AI crawlers do neither reliably. GPTBot, ClaudeBot, and PerplexityBot fetch the initial HTML and parse what they see. They do not scroll, and they do not wait for lazy fetches.

On an infinite-scroll archive page, the crawler sees only the first 10 to 20 items. If the archive holds another 1,000 items, all of them are invisible. If those items represent your back catalog of articles, products, or case studies, AI engines have no path to them.
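To make this concrete, here is a minimal sketch of what a non-rendering crawler can extract from the initial response. The HTML sample, the `/static/load-more.js` path, and the function names are hypothetical; real crawlers are more sophisticated, but the principle holds: links that only exist after a script runs are not in the markup.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags without executing any script."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def links_in_initial_html(html: str) -> list[str]:
    """Return the links visible in the raw HTML, in document order."""
    parser = LinkCollector()
    parser.feed(html)
    return parser.links


# Hypothetical initial response for an infinite-scroll archive: two items are
# server-rendered; the rest would be injected later by a script that a
# non-rendering crawler never runs.
INFINITE_SCROLL_HTML = """
<ul id="posts">
  <li><a href="/blog/post-1/">Post 1</a></li>
  <li><a href="/blog/post-2/">Post 2</a></li>
</ul>
<div id="sentinel"></div>
<script src="/static/load-more.js"></script>
"""

print(links_in_initial_html(INFINITE_SCROLL_HTML))
# ['/blog/post-1/', '/blog/post-2/']
```

Running the same function against your own archive's raw HTML (fetched without a browser) is a quick way to see how many items a crawler can actually reach.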

How AI crawlers actually traverse archives

AI crawlers discover content by:

- Following links from already-crawled pages.

- Reading XML sitemaps.

- Reading the homepage and category landing pages.

- Reading internal navigation menus.

None of these support infinite scroll discovery. If a blog post is only reachable via scrolling past page 3 of an infinite-scroll archive, it is effectively invisible to AI crawlers unless it appears in the sitemap (and even then sitemaps are de-prioritised relative to discovered links).
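One way to spot this problem is to compare the URLs in your sitemap with the URLs reachable through server-rendered links. The sketch below assumes a standard sitemaps.org `urlset` document; the example URLs and the `sitemap_only` helper are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> set[str]:
    """Extract every <loc> value from a <urlset> sitemap."""
    root = ET.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def sitemap_only(linked: set[str], in_sitemap: set[str]) -> set[str]:
    """URLs a crawler can learn about from the sitemap but not from links."""
    return in_sitemap - linked


# Hypothetical sitemap with one post that no server-rendered page links to.
SITEMAP_XML = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/blog/post-1/</loc></url>
  <url><loc>https://example.com/blog/old-post/</loc></url>
</urlset>
"""

linked = {"https://example.com/blog/post-1/"}
print(sitemap_only(linked, sitemap_urls(SITEMAP_XML)))
# {'https://example.com/blog/old-post/'}
```

Anything this reports depends entirely on the sitemap for discovery, which is the weaker of the two paths described above.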

Pagination patterns that work for AI search

Three pagination patterns are AI-friendly:

- Numbered pagination (/blog/page/2/, /blog/page/3/): Clean URL per page, fully crawlable, easy to implement.

- Cursor-based pagination (/blog?after=post_id): Slightly less SEO-friendly URL but fully crawlable if links are rendered server-side.

- Load more button (with full URL change): Acceptable if the URL updates and the new state is server-renderable.

The key requirement is that every paginated state has a discoverable URL that returns server-rendered content. JS-only state changes break this.
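As an illustration of the numbered pattern, here is a minimal server-side sketch. The `Page` dataclass, the `paginate` function, and the 20-items-per-page default are assumptions for the example, not a prescribed implementation; the URL shape follows the /blog/page/N/ pattern above.

```python
from dataclasses import dataclass
from math import ceil
from typing import Optional, Sequence


@dataclass
class Page:
    items: list
    number: int
    total_pages: int
    prev_url: Optional[str]
    next_url: Optional[str]


def page_url(base: str, number: int) -> str:
    """Build the URL for a numbered page, e.g. /blog/page/2/."""
    return f"{base}page/{number}/"


def paginate(items: Sequence, number: int, per_page: int = 20, base: str = "/blog/") -> Page:
    """Slice one page of items and compute its neighbouring page URLs."""
    total_pages = max(1, ceil(len(items) / per_page))
    if not 1 <= number <= total_pages:
        raise ValueError(f"page {number} is outside 1..{total_pages}")
    start = (number - 1) * per_page
    return Page(
        items=list(items[start:start + per_page]),
        number=number,
        total_pages=total_pages,
        prev_url=page_url(base, number - 1) if number > 1 else None,
        next_url=page_url(base, number + 1) if number < total_pages else None,
    )


posts = [f"post-{i}" for i in range(1, 46)]  # 45 hypothetical posts
page = paginate(posts, 2)
print(page.items[0], page.items[-1], page.prev_url, page.next_url)
# post-21 post-40 /blog/page/1/ /blog/page/3/
```

Every state the user can reach corresponds to a URL the server can render on its own, which is the requirement stated above.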

Progressive enhancement: best of both worlds

If your team loves infinite scroll for UX reasons, layer it on top of paginated URLs:

- Server renders /blog/page/1/ with the first 20 items and links to /blog/page/2/.

- On the client, JS intercepts the link and replaces it with a fetch that injects the next batch into the current view.

- The browser URL updates via pushState to /blog/page/2/.

- Direct navigation to /blog/page/2/ works server-side.

This pattern (similar to what some frameworks call ‘pjax’ or ‘turbo’ navigation) gives users the seamless infinite scroll experience while keeping pages discoverable to crawlers. Frameworks like Next.js, Hotwire, and Astro provide the building blocks: server-rendered URLs plus client-side navigation that updates the browser history.
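The server half of this pattern can be sketched as follows. The function name, the `next-page` id, and the `data-enhance` attribute are hypothetical; the point is only that the link to the next page is a real anchor in the HTML, so it works before any script runs.

```python
from html import escape
from typing import Optional


def render_archive_page(posts: list[tuple[str, str]], next_url: Optional[str]) -> str:
    """Render an archive page as plain HTML that works with JavaScript disabled.

    posts is a list of (url, title) pairs for the current page.
    """
    items = "\n".join(
        f'  <li><a href="{escape(url, quote=True)}">{escape(title)}</a></li>'
        for url, title in posts
    )
    html = f'<ul id="posts">\n{items}\n</ul>\n'
    if next_url is not None:
        # A real anchor: crawlers can follow it as-is. A client script (not
        # shown) could intercept it, fetch this URL, append the returned items,
        # and call history.pushState with the same URL.
        html += (
            f'<a id="next-page" href="{escape(next_url, quote=True)}" '
            'data-enhance="infinite-scroll">Older posts</a>\n'
        )
    return html


print(render_archive_page([("/blog/post-1/", "Post 1")], "/blog/page/2/"))
```

If the script fails to load, the user still gets a working "Older posts" link, and the crawler never needed the script in the first place.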

rel=next and rel=prev: deprecated but still useful

Google announced in 2019 that it no longer uses rel=next/rel=prev as an indexing signal, and most teams stopped adding them. But other crawlers (AI crawlers and Bing among them) still parse them, and they cost nothing to add. Best practice in 2026 is to include them as a belt-and-braces measure:

On /blog/page/2/, include two link elements in the head: one with rel=prev pointing to /blog/page/1/ and one with rel=next pointing to /blog/page/3/. Also add a self-referential canonical pointing to /blog/page/2/, not to /blog/page/1/ (a common mistake that hides paginated content from indexing).

The canonical mistake is the bigger issue. Many SEO plugins canonicalise all paginated pages to page 1, which actively hides the content of pages 2 and beyond from search engines.
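A small sketch of generating these head tags, with the canonical always pointing at the page itself. The function names and the optional `origin` parameter (for emitting absolute URLs) are assumptions for illustration.

```python
from html import escape


def page_path(base: str, page: int) -> str:
    """Build the path for a numbered page, e.g. /blog/page/2/."""
    return f"{base}page/{page}/"


def pagination_head_tags(base: str, page: int, total_pages: int, origin: str = "") -> list[str]:
    """Return canonical, prev, and next <link> tags for one paginated page."""
    if not 1 <= page <= total_pages:
        raise ValueError("page out of range")

    def link(rel: str, number: int) -> str:
        href = escape(origin + page_path(base, number), quote=True)
        return f'<link rel="{rel}" href="{href}">'

    # Self-referential canonical: page N points to page N, never to page 1.
    tags = [link("canonical", page)]
    if page > 1:
        tags.append(link("prev", page - 1))
    if page < total_pages:
        tags.append(link("next", page + 1))
    return tags


for tag in pagination_head_tags("/blog/", page=2, total_pages=3):
    print(tag)
# <link rel="canonical" href="/blog/page/2/">
# <link rel="prev" href="/blog/page/1/">
# <link rel="next" href="/blog/page/3/">
```

Passing something like `origin="https://example.com"` would emit absolute URLs, which is generally the safer choice for canonicals.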

Pagination depth: how far should crawlers go?

Crawlers do not follow pagination indefinitely. Beyond 5 to 10 pages deep, the crawl rate drops sharply. For deep archives, pagination alone is insufficient. Add:

- Category and tag pages that link directly to important content.

- Year and month archive pages that crawlers can reach in 1 to 2 hops from the homepage.

- Featured content links from the homepage to specific deep articles.

- An XML sitemap listing all URLs, so crawlers can discover content not linked through navigation.

- A ‘related posts’ block on every article that links to 3 to 5 deep archive items.

These structures collapse the link distance from homepage to deep content from 10+ hops to 3 to 4 hops, which dramatically increases crawl coverage.
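Link distance can be measured with a breadth-first search over your internal link graph. The sketch below uses a tiny hand-built graph; the URLs (including the `/blog/2019/` year archive) are hypothetical, and in practice the graph would come from a crawl of your own site.

```python
from collections import deque


def link_depths(graph: dict[str, list[str]], start: str = "/") -> dict[str, int]:
    """Breadth-first search: minimum number of hops from start to each URL."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        url = queue.popleft()
        for target in graph.get(url, []):
            if target not in depths:
                depths[target] = depths[url] + 1
                queue.append(target)
    return depths


# Pagination only: the old post is linked solely from page 10 of the archive.
pagination_only = {"/": ["/blog/page/1/"]}
for n in range(1, 10):
    pagination_only[f"/blog/page/{n}/"] = [f"/blog/page/{n + 1}/"]
pagination_only["/blog/page/10/"] = ["/blog/old-post/"]

# Same site plus a year archive linked from the homepage.
with_archive = {
    **pagination_only,
    "/": ["/blog/page/1/", "/blog/2019/"],
    "/blog/2019/": ["/blog/old-post/"],
}

print(link_depths(pagination_only)["/blog/old-post/"])  # 11
print(link_depths(with_archive)["/blog/old-post/"])     # 2
```

Sorting the result by depth gives a quick list of the content that most needs an extra entry point.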

E-commerce pagination: special considerations

Product listings have unique pagination needs:

- Avoid faceted filter URLs that explode into millions of URL combinations; point their canonical at the unfaceted version.

- Use server-rendered pagination for the main category pages.

- Allow client-side filtering on top of server-rendered pages (so users get fast filtering without breaking crawlability).

- Reduce the depth crawlers need to reach by surfacing top products on category landing pages; rel=prev/next can signal the page sequence but does not shorten it.

E-commerce sites that get this wrong end up with millions of crawled URLs of nearly identical filtered listings, which dilutes crawl budget away from actual product pages.
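Computing the canonical for a faceted URL can be as simple as dropping the facet parameters and keeping everything else. This is a sketch under assumptions: the parameter names in `FACET_PARAMS` and the shop URL are hypothetical, and a `page` query parameter is kept so paginated listings stay self-canonical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical facet parameters; replace with the ones your shop actually uses.
FACET_PARAMS = {"color", "size", "brand", "sort", "price_min", "price_max"}


def canonical_listing_url(url: str) -> str:
    """Strip facet parameters (and any fragment) from a listing URL."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in FACET_PARAMS
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


print(canonical_listing_url("https://shop.example/shoes/?color=red&size=9&page=2"))
# https://shop.example/shoes/?page=2
print(canonical_listing_url("https://shop.example/shoes/?sort=price"))
# https://shop.example/shoes/
```

Every filtered variant of a category page then declares the same unfaceted URL as its canonical, rather than competing with it.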

Frequently Asked Questions

Will Google penalise infinite scroll?

Can I use 'Load More' button instead of infinite scroll?

How many items per paginated page is ideal?

Should I noindex paginated pages past page 1?

Does pagination affect Core Web Vitals?
