
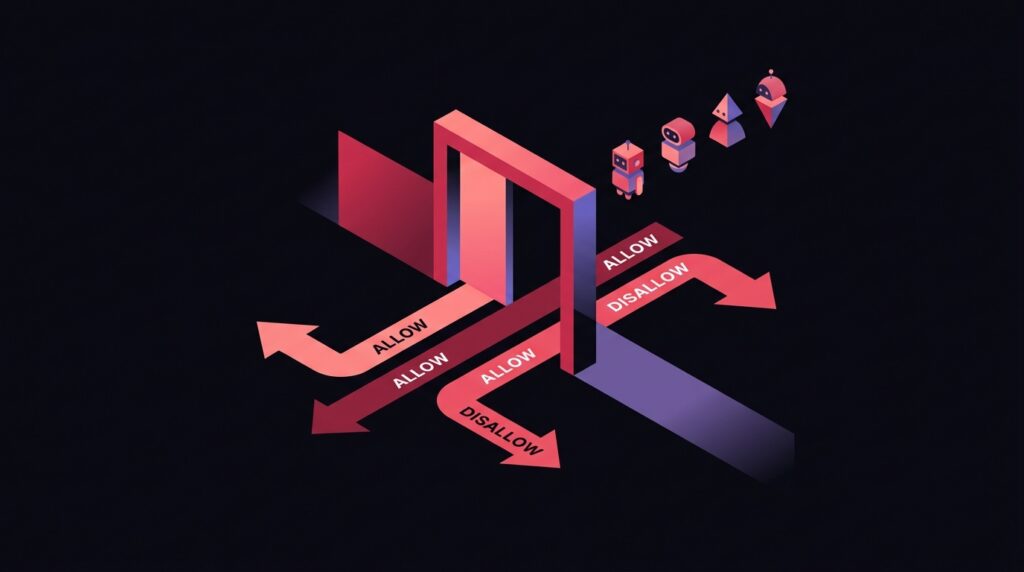

There are now over 30 distinct AI-related crawlers hitting your site. Some retrieve content for live AI answers and cite you in the process. Some scrape for model training, with no citation in return. Some do both. The robots.txt decisions you make in 2026 either compound your AI visibility or quietly cut you out of half the citation ecosystem.

The 4 categories of AI crawler

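- Search-time retrieval crawlers. OAI-SearchBot and PerplexityBot. These are the ones that get you cited. Block them and you disappear from the corresponding AI search results. Google-Extended is often listed here too, but per Google's crawler documentation it is a robots.txt control token for Gemini training and grounding rather than a separate crawler, and it does not affect inclusion in Google Search, where AI Overviews follow the regular Googlebot rules.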

- Live user-fetch agents. ChatGPT-User, Perplexity-User, Claude-User. These fetch a page in real time when a user asks a question that requires fresh data. Blocking these makes you invisible for live queries.

- Training crawlers. GPTBot, ClaudeBot, Google-Extended (Google's control token for Gemini training), and CCBot (Common Crawl, whose corpus feeds many models). These ingest content for future model training. There is no direct citation benefit, so allowing them is a brand-strategy decision.

- Misclassified or hostile bots. Legacy tokens such as Anthropic’s old anthropic-ai, and scrapers that impersonate legitimate crawlers. These need separate handling, because robots.txt only constrains bots that choose to honor it.
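The four categories above can be sketched as a small lookup that labels a raw User-Agent header. This is a minimal illustration, not an authoritative registry: the category labels and the `classify_user_agent` helper are hypothetical names, the token list only covers the bots named in this article, and Google-Extended is left out on the assumption that it is a robots.txt control token that does not appear in request headers.

```python
# Tokens named in this article, mapped to the four categories.
# The category labels are illustrative, not an official taxonomy.
AI_CRAWLER_CATEGORIES = {
    "OAI-SearchBot": "search-retrieval",
    "PerplexityBot": "search-retrieval",
    "ChatGPT-User": "live-fetch",
    "Perplexity-User": "live-fetch",
    "Claude-User": "live-fetch",
    "GPTBot": "training",
    "ClaudeBot": "training",
    "CCBot": "training",
    "anthropic-ai": "legacy-or-suspect",
}


def classify_user_agent(user_agent: str) -> str:
    """Return the category of the first known token found in the header."""
    lowered = user_agent.lower()
    for token, category in AI_CRAWLER_CATEGORIES.items():
        if token.lower() in lowered:
            return category
    return "unknown"


if __name__ == "__main__":
    sample = "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"
    print(classify_user_agent(sample))
```

A header match alone does not prove the request is genuine, since impersonating bots send the same string; treat the label as a starting point for the separate handling mentioned in the last bullet.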

The default-allow stance

For most brands, the right default is to ALLOW search and live-fetch crawlers, and ALLOW training crawlers as well. Three reasons:

- Citation upside outweighs training-data downside in almost all categories.

- Training-data ingestion compounds your entity recognition across future model versions.

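- Blocking individual training crawlers does not fully remove you from training data. Common Crawl's CCBot ingests you unless you block it too, its corpus feeds many models, and pages captured before a block remain in snapshots that have already been published.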

When blocking training crawlers makes sense

- Paywalled or members-only content. If your business model depends on gated access, blocking training crawlers is a legitimate defense.

- Original research and proprietary data. Independent research firms often block training crawlers to preserve the value of their data.

- Image-heavy creative work. Photographers, illustrators, and designers have legitimate concerns about training-data appropriation. Blocking GPTBot via robots.txt is reasonable.

- Legal or regulatory restrictions. Some jurisdictions and contracts require opt-out from AI training.

Even in these cases, allow the search-time crawlers (OAI-SearchBot, PerplexityBot) so you remain discoverable.

The robots.txt template that handles 2026

A practical default for a brand that wants maximum AI visibility:

    User-agent: *
    Allow: /

    User-agent: GPTBot
    Allow: /

    User-agent: OAI-SearchBot
    Allow: /

    User-agent: ChatGPT-User
    Allow: /

    User-agent: ClaudeBot
    Allow: /

    User-agent: Claude-User
    Allow: /

    User-agent: PerplexityBot
    Allow: /

    User-agent: Perplexity-User
    Allow: /

    User-agent: Google-Extended
    Allow: /

    Sitemap: https://yoursite.com/sitemap.xml

If you want to block training only:

    User-agent: GPTBot
    Disallow: /

    User-agent: ClaudeBot
    Disallow: /

    User-agent: CCBot
    Disallow: /

    # Still allow search-time
    User-agent: OAI-SearchBot
    Allow: /

    User-agent: PerplexityBot
    Allow: /
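One way to sanity-check the training-only template before deploying it is to parse it with Python's standard-library `urllib.robotparser` and ask, per bot, whether a page may be fetched. The sketch below assumes a hypothetical `/pricing` URL and a hypothetical `check_policy` helper. Python's parser applies its own matching rules, which may differ from how each crawler interprets the file, so treat the result as a consistency check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

# The "block training only" template, with each User-agent line
# directly followed by its rule so the groups parse cleanly.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: CCBot
Disallow: /

# Still allow search-time
User-agent: OAI-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /
"""


def check_policy(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Map each agent name to whether this robots.txt lets it fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


AGENTS = ["GPTBot", "ClaudeBot", "CCBot", "OAI-SearchBot", "PerplexityBot", "ChatGPT-User"]
results = check_policy(ROBOTS_TXT, AGENTS, "https://yoursite.com/pricing")

if __name__ == "__main__":
    for agent, allowed in results.items():
        print(f"{agent:16} {'allowed' if allowed else 'blocked'}")
```

ChatGPT-User has no group of its own in this template and there is no `User-agent: *` group, so the parser falls back to allowing it, which matches the intent of keeping live-fetch agents unblocked.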

Beyond robots.txt: meta tags and HTTP headers

- noai and noimageai meta tags. Less universally honoured than robots.txt but signal opt-out for compliant scrapers.

- X-Robots-Tag HTTP header. Apply to non-HTML resources (PDFs, images) where meta tags don’t fit.

- Cloudflare AI Audit and similar services. Provide per-bot allow/block at the CDN edge with logging. Useful for high-traffic sites.
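As a rough illustration of the first two options, the sketch below builds the meta tag for HTML pages and the `X-Robots-Tag` header for non-HTML files. The helper names and the list of file suffixes are assumptions made for this example. `noai` and `noimageai` are non-standard directives, so this only signals an opt-out to scrapers that choose to recognize them, and the function expects a bare URL path without a query string.

```python
from pathlib import PurePosixPath

# Hypothetical set of non-HTML types that should carry the header.
NON_HTML_SUFFIXES = {".pdf", ".png", ".jpg", ".jpeg", ".gif", ".webp"}

OPT_OUT_DIRECTIVES = "noai, noimageai"


def opt_out_meta_tag() -> str:
    """Meta tag to place in the <head> of HTML pages."""
    return f'<meta name="robots" content="{OPT_OUT_DIRECTIVES}">'


def opt_out_headers(path: str) -> dict[str, str]:
    """Extra response headers for a URL path (no query string expected)."""
    suffix = PurePosixPath(path).suffix.lower()
    if suffix in NON_HTML_SUFFIXES:
        return {"X-Robots-Tag": OPT_OUT_DIRECTIVES}
    # HTML pages rely on the meta tag instead of the header.
    return {}


if __name__ == "__main__":
    print(opt_out_meta_tag())
    print(opt_out_headers("/reports/2026-benchmark.pdf"))
```

In practice the same header is usually set in the web server or CDN configuration; the function form just makes the rule easy to test.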

Auditing what your robots.txt actually does

- Pull your server access logs from the last 30 days. Filter for known AI user-agents.

- Check which crawlers actually hit you and at what frequency. Verify your robots.txt rules match your intent.

- Test reachability per crawler with curl:

    curl -A 'OAI-SearchBot' https://yoursite.com/robots.txt

- Spot-check that your most important pages return 200 to all allowed crawlers.

- Re-audit quarterly as new crawlers emerge.
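The log-review steps above could be sketched as a short script that counts hits and status codes per AI crawler. It assumes access logs in the common "combined" format, where the User-Agent is the last quoted field; the token list, the `summarize_ai_hits` helper, and the sample lines are illustrative, so adjust the pattern to your server's actual log format.

```python
import re
from collections import Counter, defaultdict

# User-agent tokens named in this article.
AI_TOKENS = [
    "GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot",
    "Claude-User", "PerplexityBot", "Perplexity-User", "CCBot",
]

# Matches: "REQUEST" STATUS BYTES "REFERER" "USER-AGENT"
LOG_RE = re.compile(
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) \S+ '
    r'"(?P<referer>[^"]*)" "(?P<ua>[^"]*)"'
)


def summarize_ai_hits(log_lines):
    """Return {token: Counter({status_code: hits})} for known AI crawlers."""
    summary = defaultdict(Counter)
    for line in log_lines:
        match = LOG_RE.search(line)
        if not match:
            continue  # skip lines that are not in combined format
        user_agent = match.group("ua").lower()
        for token in AI_TOKENS:
            if token.lower() in user_agent:
                summary[token][match.group("status")] += 1
                break
    return summary


if __name__ == "__main__":
    sample = [
        '203.0.113.7 - - [12/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" '
        '200 5123 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    ]
    for token, statuses in summarize_ai_hits(sample).items():
        print(token, dict(statuses))
```

A crawler you intend to allow that shows mostly 403 or 5xx responses suggests something other than robots.txt, such as a firewall or CDN rule, is blocking it.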

Frequently Asked Questions

Will blocking GPTBot remove me from ChatGPT entirely?

Should I block CCBot (Common Crawl)?

Are robots.txt rules legally binding on AI companies?
