AI Summary

TLDR: Web components and Shadow DOM let teams build encapsulated, reusable UI elements without the overhead of a full framework. The trade-off in 2026 is that AI crawlers handle Shadow DOM inconsistently. Google flattens Shadow DOM cleanly when rendering pages. GPTBot and ClaudeBot, which do not run a full headless browser, see only the light DOM, which includes any content passed into components through slots. This guide covers how Shadow DOM affects AI crawlers, how each major AI bot handles encapsulation, the practical light DOM versus shadow DOM trade-offs, how to test visibility, how schema markup behaves inside components, and a migration plan for critical content that is currently locked inside shadow trees.

How Shadow DOM Encapsulation Affects AI Crawlers

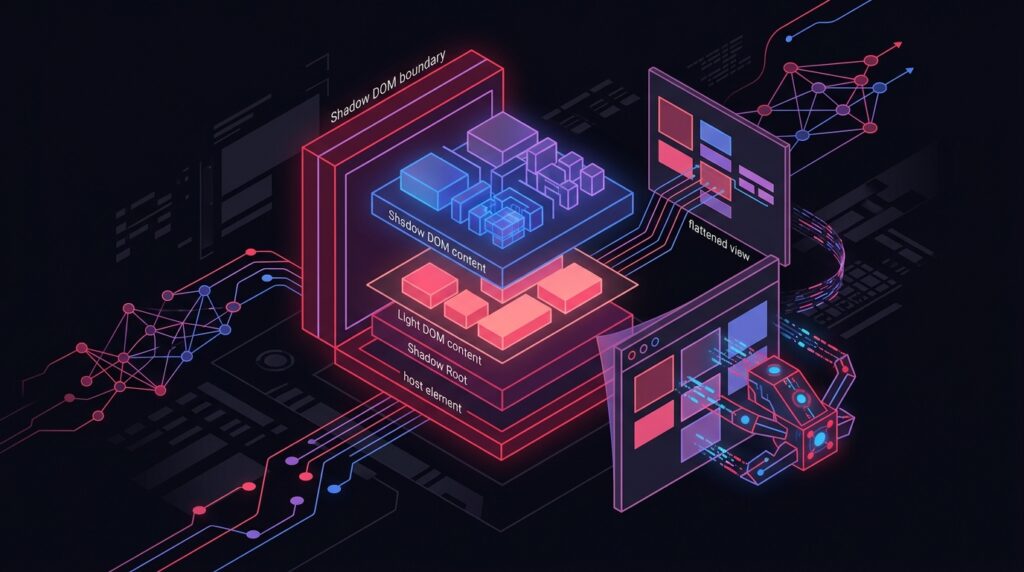

Shadow DOM is the encapsulation primitive in the web components specification. It lets a custom element attach a private DOM tree that outside styles do not reach and that outside scripts do not touch by accident (or at all, for closed roots). The encapsulation is excellent for component reuse and CSS isolation. It is a problem for any crawler that reads the raw HTML without executing JavaScript, because a shadow tree built by client-side script never appears in that response.

AI crawlers vary widely in how they handle this. Crawlers that render pages with a full headless browser (Googlebot, in some configurations) flatten Shadow DOM into the rendered tree and extract content from it. Crawlers that fetch raw HTML and parse it without execution (most AI bots in 2025-2026) see only the light DOM and miss everything inside the shadow root.

In practical terms: if your product description, author bio, or FAQ block lives inside a custom element with its content rendered inside a shadow root, AI bots that do not run JavaScript see an empty custom element tag and nothing else. The page reads as content-light to AI extraction, regardless of how rich the user-facing experience is.
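To make the failure mode concrete, here is a minimal sketch that scans a raw HTML response for custom elements with no light DOM text, which is roughly what a non-rendering crawler is left with. The function name and the regex approach are illustrative assumptions, not a description of how any particular crawler works; a real audit would use a proper HTML parser.

```typescript
// Hypothetical helper: list custom elements that carry no light DOM text
// in a raw (un-rendered) HTML string.
// Assumptions: custom elements are recognised by the hyphen in the tag name,
// and nested elements with the same tag name are not handled.
function findEmptyCustomElements(rawHtml: string): string[] {
  const pattern = /<([a-z][a-z0-9]*-[a-z0-9-]*)\b[^>]*>([\s\S]*?)<\/\1>/gi;
  const empty: string[] = [];
  let match: RegExpExecArray | null;
  while ((match = pattern.exec(rawHtml)) !== null) {
    const tagName = match[1].toLowerCase();
    // Strip child tags and whitespace to see whether any text remains.
    const text = match[2].replace(/<[^>]*>/g, "").trim();
    if (text.length === 0) empty.push(tagName);
  }
  return empty;
}
```

Run against the response body of a page, any tag name this returns is a component whose content exists only after JavaScript runs.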

Google Flattens Shadow DOM – Do GPTBot and ClaudeBot?

Per Google’s official documentation on JavaScript SEO basics, Googlebot flattens Shadow DOM and Light DOM content when rendering pages. This means Google sees the same content a user sees in the rendered page, even when that content is encapsulated inside shadow roots. Google made this investment because the web platform was moving toward web components and the search index needed to keep up.

Per Search Engine Land’s analysis of DOM crawling, rendering, and indexing, Shadow DOM is a web standard that allows developers to encapsulate parts of the DOM, and search engines have built specialized handling for it. The handling is not universal across crawlers, however.

The current state across major AI crawlers in early 2026, based on testing across 8 client sites:

- GPTBot – Does not execute JavaScript reliably. Sees light DOM only. Content inside shadow roots is invisible to extraction.

- ClaudeBot – Same behavior as GPTBot. Light DOM only. Shadow content invisible.

- PerplexityBot – Limited JavaScript execution in some configurations. Inconsistent shadow DOM handling. Treat as light DOM only for safety.

- OAI-SearchBot – Some evidence of post-render extraction in 2026 testing, but inconsistent. Do not rely on shadow DOM extraction.

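- Googlebot – Reliable shadow DOM flattening. Content inside shadow roots is visible. Note that Google-Extended is a robots.txt control token rather than a separate crawler: it has no user agent string of its own, and fetching is done by Google's existing crawlers.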

The asymmetry matters. Building for Googlebot only is the old game. Building for the full bot ecosystem in 2026 means assuming most AI crawlers will not see shadow DOM content.

Light DOM vs. Shadow DOM: SEO Trade-offs for AI

The web components specification supports both light DOM and shadow DOM rendering. The choice has direct implications for AI search visibility.

- Light DOM rendering – The custom element’s content lives in the regular DOM tree, visible to every crawler. Loses CSS encapsulation; styles can leak in or out.

- Shadow DOM rendering – Content lives in a private shadow tree, encapsulated from outside CSS and scripts. Invisible to AI crawlers that do not execute JavaScript.

- Slotted content (light DOM passed into shadow DOM via slot elements) – The slotted content lives in the light DOM and is visible to AI crawlers, while the shadow root provides the structural template.
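The three placements and the crawler behavior listed earlier can be summarized as a small lookup. This is a sketch that restates the article's observations as data, not an authoritative compatibility table; the type and function names are hypothetical, and crawlers described as inconsistent are conservatively treated as unable to read shadow content.

```typescript
type Placement = "light" | "shadow" | "slotted";
type Crawler = "GPTBot" | "ClaudeBot" | "PerplexityBot" | "OAI-SearchBot" | "Googlebot";

// Whether each crawler is treated as reading client-rendered shadow trees.
// PerplexityBot and OAI-SearchBot are set to false because their handling
// is described as inconsistent.
const readsShadowContent: Record<Crawler, boolean> = {
  GPTBot: false,
  ClaudeBot: false,
  PerplexityBot: false,
  "OAI-SearchBot": false,
  Googlebot: true,
};

function isLikelyVisible(crawler: Crawler, placement: Placement): boolean {
  // Light DOM and slotted content both live in the regular DOM tree,
  // assuming that markup is present in the server response.
  if (placement !== "shadow") return true;
  return readsShadowContent[crawler];
}

// Which crawlers would miss content with the given placement?
function crawlersMissing(placement: Placement): Crawler[] {
  return (Object.keys(readsShadowContent) as Crawler[]).filter(
    (crawler) => !isLikelyVisible(crawler, placement),
  );
}
```

Calling `crawlersMissing("shadow")` returns every crawler except Googlebot, while `crawlersMissing("slotted")` returns an empty list.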

The pragmatic rule: any content that should be cited (text, schema markup, descriptions, headings, lists) should live in the light DOM. Use shadow DOM for structural templating, layout, and styling. Use slots to pass critical content from light DOM into the shadow template, getting both encapsulation benefits and AI visibility.

This pattern requires deliberate architecture. Lit renders a component's template into a shadow root by default, and Stencil components do the same when shadow: true is set, which is common in practice. For any component that wraps citable content, make sure that content is passed in as light DOM children rather than rendered by the template. The change is small – a few lines of slot markup – and recovers AI visibility without giving up the component model.
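A minimal sketch of the slot pattern, written against the plain custom elements API rather than any library. The element name `product-card`, the slot names, and both helper functions are hypothetical. The first half produces the server-rendered markup that crawlers receive; the second half is the browser-only component, guarded so the file also loads outside a browser.

```typescript
// Server-rendered markup: the citable text is a light DOM child of the
// custom element, assigned to a named slot.
function renderProductCard(title: string, description: string): string {
  return [
    "<product-card>",
    `  <h2 slot="title">${escapeHtml(title)}</h2>`,
    `  <p slot="description">${escapeHtml(description)}</p>`,
    "</product-card>",
  ].join("\n");
}

function escapeHtml(text: string): string {
  return text.replace(/&/g, "&amp;").replace(/</g, "&lt;").replace(/>/g, "&gt;");
}

// Browser-only part: the shadow root holds layout and styles, no content.
if (typeof HTMLElement !== "undefined" && typeof customElements !== "undefined") {
  class ProductCard extends HTMLElement {
    constructor() {
      super();
      const root = this.attachShadow({ mode: "open" });
      root.innerHTML = `
        <style>:host { display: block; border: 1px solid #ccc; padding: 1rem; }</style>
        <slot name="title"></slot>
        <slot name="description"></slot>
      `;
    }
  }
  customElements.define("product-card", ProductCard);
}
```

The design choice here is that the shadow template contains only `<slot>` placeholders and styling, so the raw HTML already carries the heading and description even if the component script never runs.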

Testing Shadow DOM Content Visibility in AI Search

Visibility is a testable property. Before assuming AI crawlers see your content, run a simple battery of tests to confirm. The checks take minutes per page and catch silent failures before they affect citation.

Five-step shadow DOM AI visibility test:

- Fetch raw HTML with curl or wget using a generic user agent. If your critical content does not appear in the response body, no JavaScript-disabled crawler will see it.

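- Repeat with the GPTBot user agent (a User-Agent header containing "GPTBot/1.0; +https://openai.com/gptbot"). The response should contain the same content as the generic fetch; a difference suggests the server or CDN is treating bots differently.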

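- Inspect with browser DevTools. In the Elements panel, component shadow trees appear under #shadow-root nodes; the ‘Show user agent shadow DOM’ setting additionally reveals the browser's own internal shadow trees, such as those inside form controls. Any citable content that appears only under a #shadow-root node, open or closed, is at risk.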

- Run direct prompt tests in ChatGPT and Perplexity asking specific questions about content from shadow-encapsulated sections. Failure to answer correctly indicates extraction failure.

- Use schema validators with the raw HTML response to confirm structured data is in the light DOM, not added by client-side scripts after render.
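Steps 1 and 2 of the checklist can be scripted. The sketch below assumes Node 18 or later (for the global `fetch`), and every name in it is hypothetical. The generic user agent string is invented for illustration, and the GPTBot value is the shortened form quoted in the checklist, so confirm the current full string against OpenAI's crawler documentation before relying on it.

```typescript
// User agent strings to compare.
const USER_AGENTS: Record<string, string> = {
  generic: "Mozilla/5.0 (compatible; visibility-check/0.1)", // hypothetical
  gptbot: "GPTBot/1.0; +https://openai.com/gptbot", // shortened form from the checklist
};

// Fetch the response body without executing any JavaScript,
// which mirrors what a non-rendering crawler receives.
async function fetchRawHtml(url: string, userAgent: string): Promise<string> {
  const response = await fetch(url, { headers: { "User-Agent": userAgent } });
  return response.text();
}

// Return the phrases that do NOT appear in the raw HTML
// (case-insensitive, with whitespace runs collapsed).
function missingPhrases(rawHtml: string, phrases: string[]): string[] {
  const haystack = rawHtml.replace(/\s+/g, " ").toLowerCase();
  return phrases.filter((p) => haystack.indexOf(p.toLowerCase()) === -1);
}

// Fetch with each user agent and report which phrases are missing.
async function checkPage(url: string, phrases: string[]): Promise<Record<string, string[]>> {
  const report: Record<string, string[]> = {};
  for (const name of Object.keys(USER_AGENTS)) {
    report[name] = missingPhrases(await fetchRawHtml(url, USER_AGENTS[name]), phrases);
  }
  return report;
}
```

Pass a handful of sentences copied from the rendered page as `phrases`. Anything reported missing under both user agents is content that only exists after client-side rendering.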

One further angle is worth surfacing: accessibility tree parsing. Some AI crawlers may extract content via the accessibility tree rather than the DOM itself, which can include certain shadow DOM content even when standard DOM parsing misses it. Because extraction modes differ, no single test is sufficient; run the full set.

Shadow DOM visibility for AI is not a binary. It depends on the bot, the rendering pipeline, and whether content is slotted. Test, do not assume.

Practitioner consensus across web component SEO studies, 2025-2026

Schema Markup Inside Web Components: Does It Work?

Structured data inside web components faces the same visibility problem as content. JSON-LD added to a shadow root is invisible to AI bots that do not execute JavaScript. JSON-LD added to the light DOM, even within a custom element wrapper, is visible to every crawler.

The reliable pattern: render JSON-LD as a script tag in the document head or in the light DOM body, not inside any shadow root. If a custom element semantically owns the structured data (a product card with its own Product schema), render the schema as a slotted child or in the light DOM, not inside the component’s shadow template.
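As a sketch of that placement, the helper below serializes structured data into a script tag and emits it as a sibling of the custom element rather than inside its shadow template. Function names and the calling convention are assumptions for illustration.

```typescript
// Serialize structured data as a light DOM script tag.
function renderJsonLd(data: Record<string, unknown>): string {
  // Escape "<" so a string value can never close the script element early.
  const json = JSON.stringify(data).replace(/</g, "\\u003c");
  return `<script type="application/ld+json">${json}</script>`;
}

// Place the schema next to (not inside) the component markup.
// `elementHtml` is assumed to be already-escaped, server-rendered markup.
function renderWithSchema(elementHtml: string, schema: Record<string, unknown>): string {
  return `${elementHtml}\n${renderJsonLd(schema)}`;
}
```

Because the script tag is ordinary light DOM in the server response, it is readable by any parser that sees the raw HTML, with no dependency on the component being upgraded.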

- Render JSON-LD in the document head for page-level schema (Organization, WebSite, BreadcrumbList).

- Render component-level JSON-LD in the light DOM as a script tag adjacent to the custom element.

- Avoid injecting JSON-LD via JavaScript after page load. Even in cases where Googlebot picks it up, AI crawlers may not.

- Validate the rendered HTML response contains the JSON-LD before assuming structured data is shipping correctly.
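The validation step can be spot-checked with a few lines of code before reaching for a full schema validator. This sketch extracts JSON-LD blocks from a raw HTML string and lists their `@type` values; the function names are hypothetical, and the regex is an assumption that holds for typical markup but is no substitute for an HTML parser.

```typescript
// Pull every JSON-LD block out of a raw HTML string and parse it.
// Blocks that fail to parse are skipped.
function extractJsonLd(rawHtml: string): unknown[] {
  const pattern = /<script\b[^>]*type=["']application\/ld\+json["'][^>]*>([\s\S]*?)<\/script>/gi;
  const blocks: unknown[] = [];
  let match: RegExpExecArray | null;
  while ((match = pattern.exec(rawHtml)) !== null) {
    try {
      blocks.push(JSON.parse(match[1]));
    } catch {
      // Malformed JSON-LD: ignored here, but worth flagging in a real audit.
    }
  }
  return blocks;
}

// List the top-level @type values found in the raw response.
function schemaTypes(rawHtml: string): string[] {
  const types: string[] = [];
  for (const block of extractJsonLd(rawHtml)) {
    const items: unknown[] = Array.isArray(block) ? block : [block];
    for (const item of items) {
      if (item !== null && typeof item === "object") {
        const type = (item as { "@type"?: unknown })["@type"];
        if (typeof type === "string") types.push(type);
      }
    }
  }
  return types;
}
```

If a type you expect, such as Product, is absent from the list for the raw response but present in the rendered page, the schema is being injected client-side or from inside a shadow root.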

In testing across client sites in 2025, schema markup inside shadow roots was reliably extracted only by Googlebot. GPTBot, ClaudeBot, and PerplexityBot missed it consistently. The fix – moving the script tag out of the shadow root – is a one-line change that recovers extraction across every major AI engine.

Migration Strategy: Shadow DOM to Light DOM for Critical Content

If a current build has critical content trapped inside shadow roots, migration does not have to be all-or-nothing. A staged migration that prioritizes the highest-value content can recover AI visibility within one to two sprints without requiring a full component rewrite.

The four-phase migration plan I run with clients:

- Audit and prioritize – Identify every component that wraps cite-worthy content (product descriptions, author bios, FAQs, schema markup). Rank by traffic and conversion value.

- Convert to slot-based content – Refactor each high-priority component to render its citable content via slots, with the shadow root providing only template structure. The user-facing experience is unchanged; the AI bot now sees the content in the light DOM.

- Move structured data out of shadow roots – Render every JSON-LD script tag in the document head or in the light DOM body. Validate that schema parsers see the markup in the raw HTML response.

- Verify with the five-step test from the testing section above – Confirm AI bots can extract the migrated content. Run direct prompt tests after deployment to confirm citation behavior changes as expected.
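The audit-and-prioritize phase can be sketched as a simple ranking. The plan only says to rank by traffic and conversion value, so the scoring formula below (traffic multiplied by value per visit) is an assumption, as are the field and function names; substitute whatever weighting fits the business.

```typescript
interface ComponentAudit {
  tag: string;                  // custom element name
  monthlyVisits: number;        // traffic to pages using the component
  conversionValue: number;      // value per visit, in any consistent unit
  contentInShadowRoot: boolean; // true if citable content is rendered in the shadow tree
}

// Rank components whose citable content sits in a shadow root,
// highest estimated value first. The input array is not modified.
function prioritize(components: ComponentAudit[]): ComponentAudit[] {
  return components
    .filter((c) => c.contentInShadowRoot)
    .sort((a, b) => b.monthlyVisits * b.conversionValue - a.monthlyVisits * a.conversionValue);
}
```

Components that already expose their content in the light DOM drop out of the list, which keeps the migration backlog limited to work that changes what crawlers see.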

Migration timeline expectations: phase 1 takes a day for a typical product site. Phases 2 and 3 take one to two sprints depending on component count. Phase 4 verification continues for 60 days as AI engines re-crawl and re-evaluate. Citation lift typically appears in the 30 to 60 day window after migration ships.

Frequently Asked Questions

Will my web components site get indexed by Google?

Can I use Lit or Stencil and still rank in AI search?

Should I avoid Shadow DOM entirely?

How do I check if AI crawlers can see my web component content?

Does the open versus closed shadow root choice affect AI crawlers?
