AI Summary

Generative AI tools draw on Wikipedia as a source, and the structured data behind Wikipedia is Wikidata. Google’s Knowledge Graph sources 30-40% of its entity data directly from Wikidata. Every major LLM (GPT-4, Claude, Gemini, Llama) uses Wikidata for entity disambiguation and fact verification. And 68% of brands that appear in Google Knowledge Panels but lack Wikipedia articles have a Wikidata entry as their primary data source. Creating and maintaining that entry is the most underinvested 30-minute SEO task in the industry.

What is Wikidata and why does every AI engine use it?

Wikidata is the machine-readable structured database that powers Wikipedia’s infoboxes, and far more. It contains 100+ million entities (Q-codes) with structured facts, all released under a CC0 public-domain dedication. Unlike proprietary knowledge graphs (Google’s, Amazon’s), Wikidata can be read and edited by anyone and queried through its public SPARQL endpoint, the Wikidata Query Service.

The architecture uses:

- Q-codes: Unique IDs for every entity (Q95 = Google, Q312 = Apple Inc.)

- P-codes: Properties describing relationships (P31 = “instance of,” P856 = “official website”)

- Statements: Structured facts linking Q-codes via P-codes with references (URLs, publications)

- Qualifiers: Context for statements (e.g., “founded by Larry Page” with start time 1998)
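To make the Q-code / P-code / statement / qualifier vocabulary concrete, here is a minimal sketch that walks a trimmed record shaped like the JSON Wikidata serves at `https://www.wikidata.org/wiki/Special:EntityData/<QID>.json`. The item `Q123456`, its values, and the start-time qualifier are hypothetical, and real responses carry many more fields; the point is only to show where each concept lives in the data.

```python
# A trimmed, hypothetical entity record shaped like Wikidata's entity JSON.
# Q123456 ("Example Corp") and every value here are made up for illustration.
entity = {
    "id": "Q123456",
    "claims": {
        "P856": [  # P-code: official website
            {
                "mainsnak": {
                    "snaktype": "value",
                    "property": "P856",
                    "datavalue": {"value": "https://example.com", "type": "string"},
                },
                "type": "statement",
                # One reference: P854 (reference URL) pointing at a source page
                "references": [
                    {"snaks": {"P854": [{
                        "snaktype": "value",
                        "property": "P854",
                        "datavalue": {"value": "https://example.com/about", "type": "string"},
                    }]}}
                ],
            }
        ],
        "P31": [  # P-code: instance of
            {
                "mainsnak": {
                    "snaktype": "value",
                    "property": "P31",
                    "datavalue": {
                        "value": {"entity-type": "item", "numeric-id": 4830453, "id": "Q4830453"},
                        "type": "wikibase-entityid",
                    },
                },
                "type": "statement",
                # Qualifier: P580 (start time) adds context to the statement
                "qualifiers": {
                    "P580": [{
                        "snaktype": "value",
                        "property": "P580",
                        "datavalue": {
                            "value": {"time": "+2015-00-00T00:00:00Z", "precision": 9},
                            "type": "time",
                        },
                    }]
                },
            }
        ],
    },
}


def snak_value(snak):
    """Return a readable value for a snak, or None for 'somevalue'/'novalue'."""
    if snak.get("snaktype") != "value":
        return None
    datavalue = snak["datavalue"]
    if datavalue["type"] == "wikibase-entityid":
        return datavalue["value"]["id"]  # a link to another Q-code
    if datavalue["type"] == "time":
        return datavalue["value"]["time"]
    return datavalue["value"]  # strings, URLs, external IDs


def statements(entity):
    """Yield (subject Q-code, P-code, value, qualifiers, reference count)."""
    for pid, claims in entity["claims"].items():
        for claim in claims:
            qualifiers = {
                qpid: [snak_value(s) for s in snaks]
                for qpid, snaks in claim.get("qualifiers", {}).items()
            }
            yield (entity["id"], pid, snak_value(claim["mainsnak"]),
                   qualifiers, len(claim.get("references", [])))


for row in statements(entity):
    print(row)
# ('Q123456', 'P856', 'https://example.com', {}, 1)
# ('Q123456', 'P31', 'Q4830453', {'P580': ['+2015-00-00T00:00:00Z']}, 0)
```

Each tuple is one statement: a Q-code linked to a value through a P-code, plus its qualifiers and how many references back it up.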

Kevin Grillot’s August 2025 analysis explains the AI dependency: “In 2025, it becomes imperative to integrate Wikidata into your digital strategy, particularly to improve presence in Google results, in AI assistants like ChatGPT, and in voice search systems. Wikidata is the hidden infrastructure of the AI knowledge economy.”

Wikidata is edited continuously by bots and human editors. Changes can reflect in Google’s Knowledge Graph within 24-72 hours, faster than most other entity data sources.

The 5 highest-impact Wikidata facts for SEO

- sameAs links (external IDs). Wikidata’s external ID properties (LinkedIn company ID P4264, Crunchbase organization ID P2088, X/Twitter username P2002, GitHub username P2037, Google Knowledge Graph ID P2671) are the #1 signal Google uses to verify brand entity connections. They play the same role as Schema.org sameAs links, telling every AI engine “all these profiles are the same entity.” (Sunil Pratap Singh)

- Official website (P856). This is the property Google uses to connect a Wikidata Q-code to a domain. Without it, your website has no machine-verified entity connection.

- Instance of (P31). Declaring your brand as “business” (Q4830453) or “organization” (Q43229) tells AI engines what category of entity you are; this affects how they describe and cite you.

- Industry (P452). Declaring your industry helps AI engines understand which queries your brand is relevant to.

- Property completeness score. Rajesh R Nair’s analysis found entities with 30+ filled properties rank 4.1x higher in AI citation confidence scores vs. entities with fewer than 10 properties.
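A quick way to check an entry against these five facts is to count its filled properties and list which of the key ones are missing. The sketch below assumes Python 3 and the public `Special:EntityData` JSON endpoint; the User-Agent string is a placeholder, and the 30 / 10 bands only mirror the thresholds quoted above. The code counts properties; it does not reproduce any citation-confidence score.

```python
import json
from urllib.request import Request, urlopen

# Key properties from the list above. P2088 is Wikidata's Crunchbase
# *organization* ID property.
KEY_EXTERNAL_IDS = {
    "P4264": "LinkedIn company or organization ID",
    "P2088": "Crunchbase organization ID",
    "P2002": "X (Twitter) username",
    "P2037": "GitHub username",
    "P2671": "Google Knowledge Graph ID",
}
CORE_PROPERTIES = {"P856": "official website", "P31": "instance of", "P452": "industry"}


def fetch_claims(qid):
    """Download an item's claims from Special:EntityData (network call)."""
    url = f"https://www.wikidata.org/wiki/Special:EntityData/{qid}.json"
    # Wikimedia asks clients to send a descriptive User-Agent; this one is a placeholder.
    request = Request(url, headers={"User-Agent": "entity-audit-sketch/0.1 (you@example.com)"})
    with urlopen(request, timeout=30) as response:
        data = json.load(response)
    # Take the single entity in the response rather than assuming its key.
    return next(iter(data["entities"].values()))["claims"]


def audit(claims):
    """Count filled properties and list which of the key ones are missing."""
    filled = {pid for pid, statements in claims.items() if statements}
    count = len(filled)
    band = "30+" if count >= 30 else ("fewer than 10" if count < 10 else "10-29")
    return {
        "filled_property_count": count,
        "band": band,
        "missing_core": sorted(p for p in CORE_PROPERTIES if p not in filled),
        "missing_external_ids": sorted(p for p in KEY_EXTERNAL_IDS if p not in filled),
    }


# Offline example with a hypothetical, sparsely filled entry
# (P2002 is present but has no statements, so it does not count):
example_claims = {"P31": [{}], "P856": [{}], "P4264": [{}], "P2002": []}
print(audit(example_claims))
```

Against a live entry you would call `audit(fetch_claims("Q…"))` with your own Q-code.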

ReputationX’s 2026 guide makes the zero-Wikipedia case clearly: “WikiData gives Google the structured brand signals it needs to build your Knowledge Panel, even if you have no Wikipedia article to your name. We’ve helped dozens of B2B SaaS companies get Knowledge Panels using only Wikidata.”

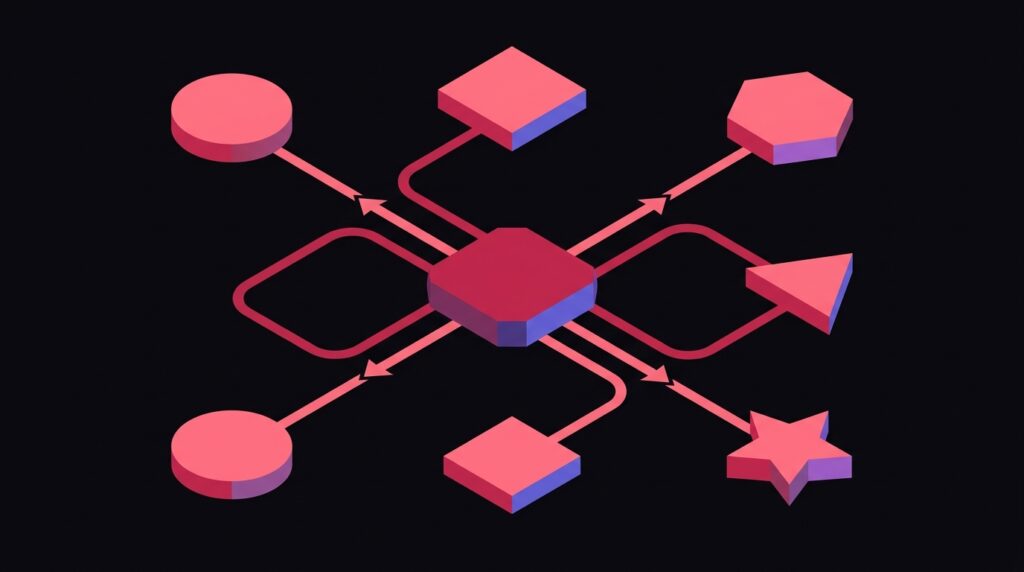

How Wikidata fits into the AI entity stack

The full entity recognition flow for AI search engines works like this:

- User searches for or mentions your brand name in an AI prompt.

- AI engine resolves “brand name” to an entity using its internal knowledge graph.

- Knowledge graph checks Wikidata Q-code for authoritative properties (official website, industry, founded date, sameAs links to social profiles).

- AI engine cross-references these properties against your website’s Schema.org markup (Organization sameAs should match Wikidata sameAs).

- If they match, AI engine cites you with confidence. If they don’t match, AI may hallucinate or decline to cite.
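The cross-referencing step in this flow can be approximated on your own side: turn the Wikidata external IDs into profile URLs and diff them against the `sameAs` array in your Organization markup. This is a minimal sketch under stated assumptions: the URL patterns are hard-coded guesses (Wikidata keeps the authoritative pattern for each property in its P1630 "formatter URL" statement), and lowercasing paths assumes these platforms treat handles case-insensitively.

```python
from urllib.parse import urlsplit

# Assumed URL patterns per external-ID property; check each property's
# P1630 (formatter URL) on Wikidata before relying on them.
FORMATTERS = {
    "P4264": "https://www.linkedin.com/company/{}",
    "P2088": "https://www.crunchbase.com/organization/{}",
    "P2002": "https://x.com/{}",
    "P2037": "https://github.com/{}",
    "P2013": "https://www.facebook.com/{}",
}
HOST_ALIASES = {"twitter.com": "x.com"}  # treat old Twitter URLs as X URLs


def normalize(url):
    """Reduce a profile URL to 'host/path' so trivial differences don't count."""
    parts = urlsplit(url.strip())
    host = parts.netloc.lower().removeprefix("www.")
    host = HOST_ALIASES.get(host, host)
    path = parts.path.rstrip("/").lower()  # assumes case-insensitive handles
    return f"{host}{path}"


def compare(schema_same_as, wikidata_ids):
    """Return profiles that appear on only one side of the website/Wikidata pair."""
    site = {normalize(u) for u in schema_same_as}
    wikidata = {
        normalize(FORMATTERS[pid].format(value))
        for pid, value in wikidata_ids.items()
        if pid in FORMATTERS
    }
    return {
        "only_on_website": sorted(site - wikidata),
        "only_on_wikidata": sorted(wikidata - site),
    }


# Hypothetical data for an example brand
report = compare(
    ["https://www.linkedin.com/company/example-corp/",
     "https://twitter.com/ExampleCorp",
     "https://github.com/example"],
    {"P4264": "example-corp", "P2002": "examplecorp", "P2088": "example-corp"},
)
print(report)
```

Any non-empty list in the report is exactly the kind of mismatch the flow above says can lead an AI engine to hesitate. `str.removeprefix` needs Python 3.9 or later.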

This is why the sameAs entity disambiguation guide emphasizes consistency across every platform: your website’s sameAs links and your Wikidata sameAs links must be identical. Inconsistency is what causes AI hallucinations about brand facts.

The full entity stack (Wikidata, Schema.org, Google Knowledge Panel, LinkedIn, Crunchbase) is covered in the entity SEO for brand AI recognition guide and the knowledge graph entity authority playbook.

Step-by-step: creating and optimizing your Wikidata entry

Here is the minimum viable Wikidata entry for a brand seeking AI search visibility:

Step 1: Create your Q-code

Visit wikidata.org, create an account, and create a new item. Required properties to add immediately:

- P31 (instance of) → Q4830453 (business) or Q43229 (organization)

- P17 (country) → your country’s Q-code

- P856 (official website) → your domain

- P571 (inception/founding date)

- P159 (headquarters location)
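If you prefer to script Step 1 rather than click through the web form, the Wikibase API accepts a whole new item as JSON. The sketch below only builds that payload; it does not log in or submit anything. The brand name, description, website, and founding year are hypothetical, Q30 is the United States of America and Q60 is New York City, and storing a year-only date as `+YYYY-00-00` with precision 9 follows Wikidata's usual serialization for year precision.

```python
import json

# Calendar model for dates: the proleptic Gregorian calendar item.
GREGORIAN = "http://www.wikidata.org/entity/Q1985727"


def statement(pid, datavalue):
    return {
        "mainsnak": {"snaktype": "value", "property": pid, "datavalue": datavalue},
        "type": "statement",
        "rank": "normal",
    }


def item_value(qid):
    """Datavalue pointing at another item, e.g. Q4830453 (business)."""
    return {"value": {"entity-type": "item", "numeric-id": int(qid.lstrip("Q"))},
            "type": "wikibase-entityid"}


def string_value(text):
    """Datavalue for string-like types; URL properties such as P856 use this too."""
    return {"value": text, "type": "string"}


def year_value(year):
    """Datavalue for a date known only to the year (precision 9)."""
    return {"value": {"time": f"+{year:04d}-00-00T00:00:00Z", "timezone": 0,
                      "before": 0, "after": 0, "precision": 9,
                      "calendarmodel": GREGORIAN},
            "type": "time"}


def new_brand_item(label, description, instance_of, country, website, founded, hq):
    """Minimum viable brand item: label, description and the five Step 1 properties."""
    return {
        "labels": {"en": {"language": "en", "value": label}},
        "descriptions": {"en": {"language": "en", "value": description}},
        "claims": [
            statement("P31", item_value(instance_of)),  # instance of
            statement("P17", item_value(country)),      # country
            statement("P856", string_value(website)),   # official website
            statement("P571", year_value(founded)),     # inception
            statement("P159", item_value(hq)),          # headquarters location
        ],
    }


payload = new_brand_item(
    label="Example Corp",                       # hypothetical brand
    description="project management software company",
    instance_of="Q4830453",                     # business
    country="Q30",                              # United States of America
    website="https://example.com",
    founded=2019,
    hq="Q60",                                   # New York City
)
print(json.dumps(payload, indent=2))
```

The resulting JSON would go in the `data` parameter of a POST to `https://www.wikidata.org/w/api.php` with `action=wbeditentity`, `new=item`, and a CSRF `token` from a logged-in session. That step is left out on purpose, and every statement still needs a reference before it is published.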

Step 2: Add sameAs external ID properties

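- P4264 (LinkedIn company or organization ID) → the slug from your LinkedIn company URL

- P2088 (Crunchbase organization ID) → the slug from your Crunchbase organization URL

- P2002 (X username) → your Twitter/X handle

- P2013 (Facebook username) → your Facebook page username

- P2037 (GitHub username) → your GitHub organization name (for tech companies)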
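Wikidata's external-ID properties store the bare identifier (for example a LinkedIn company slug), not the full profile URL, so a common mistake is pasting URLs into these fields. The sketch below pulls the identifier out of a profile URL and wraps it as a statement. The regular expressions are assumptions about typical URL shapes, not official formats; anything they don't match is reported rather than guessed.

```python
import re

# Assumed URL shapes for each external-ID property. Real profiles can take other
# forms (e.g. numeric LinkedIn IDs), so anything that does not match returns None.
ID_PATTERNS = {
    "P4264": r"linkedin\.com/company/([^/?#]+)",         # LinkedIn company or organization ID
    "P2088": r"crunchbase\.com/organization/([^/?#]+)",  # Crunchbase organization ID
    "P2002": r"(?:twitter|x)\.com/([^/?#]+)",            # X (Twitter) username
    "P2013": r"facebook\.com/([^/?#]+)",                 # Facebook username
    "P2037": r"github\.com/([^/?#]+)",                   # GitHub username
}


def extract_id(pid, url):
    """Pull the bare identifier that Wikidata stores out of a profile URL."""
    match = re.search(ID_PATTERNS[pid], url, flags=re.IGNORECASE)
    return match.group(1) if match else None


def external_id_statement(pid, identifier):
    """External-ID values are serialized as plain strings."""
    return {
        "mainsnak": {"snaktype": "value", "property": pid,
                     "datavalue": {"value": identifier, "type": "string"}},
        "type": "statement",
        "rank": "normal",
    }


def statements_from_profiles(profiles):
    """Build statements for every URL whose ID could be extracted; report the rest."""
    built, skipped = [], []
    for pid, url in profiles.items():
        identifier = extract_id(pid, url)
        if identifier is None:
            skipped.append(pid)
        else:
            built.append(external_id_statement(pid, identifier))
    return built, skipped


# Hypothetical profile URLs for an example brand
built, skipped = statements_from_profiles({
    "P4264": "https://www.linkedin.com/company/example-corp/",
    "P2002": "https://x.com/ExampleCorp?lang=en",
    "P2037": "https://github.com/example-corp",
    "P2088": "https://example.com/not-a-crunchbase-url",
})
print([s["mainsnak"]["datavalue"]["value"] for s in built], skipped)
# ['example-corp', 'ExampleCorp', 'example-corp'] ['P2088']
```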

Step 3: Add references to every statement

Every fact you add must have a reference: a URL (press release, annual report, Crunchbase page) that proves the fact. Unreferenced statements get flagged for deletion by Wikidata’s editorial community.
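In Wikidata's data model a web reference is usually a small group of snaks attached to the statement: P854 (reference URL) and P813 (retrieved). This minimal sketch attaches one to a statement and lists statements that still lack any reference. The statements, URL, and date are hypothetical.

```python
from datetime import date

GREGORIAN = "http://www.wikidata.org/entity/Q1985727"  # proleptic Gregorian calendar


def add_reference(statement, source_url, retrieved):
    """Attach a reference made of P854 (reference URL) and P813 (retrieved)."""
    reference = {
        "snaks": {
            "P854": [{"snaktype": "value", "property": "P854",
                      "datavalue": {"value": source_url, "type": "string"}}],
            "P813": [{"snaktype": "value", "property": "P813",
                      "datavalue": {"value": {
                          "time": f"+{retrieved.isoformat()}T00:00:00Z",
                          "timezone": 0, "before": 0, "after": 0,
                          "precision": 11,  # day precision
                          "calendarmodel": GREGORIAN},
                          "type": "time"}}],
        },
    }
    statement.setdefault("references", []).append(reference)
    return statement


def unreferenced(statements):
    """P-codes of statements that still have no reference at all."""
    return [s["mainsnak"]["property"] for s in statements if not s.get("references")]


# Hypothetical statements: official website (P856) and headquarters (P159 = Q60, New York City)
website = {"mainsnak": {"snaktype": "value", "property": "P856",
                        "datavalue": {"value": "https://example.com", "type": "string"}}}
headquarters = {"mainsnak": {"snaktype": "value", "property": "P159",
                             "datavalue": {"value": {"entity-type": "item", "numeric-id": 60},
                                           "type": "wikibase-entityid"}}}

add_reference(website, "https://example.com/press/launch", date(2025, 3, 1))
print(unreferenced([website, headquarters]))  # ['P159']
```

Running `unreferenced` over every statement before you publish is a cheap way to catch facts that would otherwise be flagged.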

Step 4: Create people entities for key team members

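Discovered Labs recommends creating Wikidata entries for your CEO and founders with P108 (employer) → your brand Q-code and P39 (position held) → their role. Pairing these with statements on the brand item that point back to the people, such as P112 (founded by) or P169 (chief executive officer), creates the bidirectional entity relationships AI engines trust.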
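Here is a minimal sketch of both directions of that relationship as Wikibase JSON: a new person item pointing at the brand, and the statements you would add to the brand item pointing back. Q5 is Wikidata's "human" item; every other Q-code below (brand, person, role) is a hypothetical placeholder, and the role item in particular should be looked up on Wikidata rather than guessed.

```python
def item_statement(pid, qid):
    """Statement whose value is another item."""
    return {
        "mainsnak": {"snaktype": "value", "property": pid,
                     "datavalue": {"value": {"entity-type": "item",
                                             "numeric-id": int(qid.lstrip("Q"))},
                                   "type": "wikibase-entityid"}},
        "type": "statement",
        "rank": "normal",
    }


def person_item(name, description, brand_qid, role_qid):
    """New-item payload for a founder or executive (person -> brand direction)."""
    return {
        "labels": {"en": {"language": "en", "value": name}},
        "descriptions": {"en": {"language": "en", "value": description}},
        "claims": [
            item_statement("P31", "Q5"),        # instance of: human
            item_statement("P108", brand_qid),  # employer: the brand
            item_statement("P39", role_qid),    # position held: the role item
        ],
    }


def brand_backlinks(person_qid, founder=True, ceo=False):
    """Statements to add on the brand item (brand -> person direction)."""
    links = []
    if founder:
        links.append(item_statement("P112", person_qid))  # founded by
    if ceo:
        links.append(item_statement("P169", person_qid))  # chief executive officer
    return links


# Hypothetical IDs: brand Q123456, role Q999999, and the person's new item Q7654321
jane = person_item("Jane Doe", "co-founder of Example Corp",
                   brand_qid="Q123456", role_qid="Q999999")
backlinks = brand_backlinks("Q7654321", founder=True, ceo=True)
print([c["mainsnak"]["property"] for c in jane["claims"]],
      [c["mainsnak"]["property"] for c in backlinks])
# ['P31', 'P108', 'P39'] ['P112', 'P169']
```

The person item has to exist before the brand can point at it, so in practice you create the person first, note the Q-code Wikidata assigns, then add the backlinks.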

Step 5: Add multilingual descriptions

WikiConsult reports that brands with multilingual descriptions get 3.2x more international citations. Translate your one-sentence brand description into at least English, Spanish, French, German, Chinese, Japanese, and Portuguese.
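Labels and descriptions are stored per language code, so the seven-language minimum maps onto a simple coverage check. This sketch assumes the brand name stays unchanged as the label in every language and treats 250 characters as the description limit (an assumption about the current Wikibase setting; verify it). The translations are illustrative and should come from a human translator.

```python
# Codes for the seven languages listed above ("zh" is the generic Chinese code).
TARGET_LANGUAGES = ("en", "es", "fr", "de", "zh", "ja", "pt")
MAX_DESCRIPTION_LENGTH = 250  # assumed current limit; verify before relying on it


def build_terms(label, descriptions):
    """Return Wikibase-style labels/descriptions plus the target languages still missing.

    Assumes the brand name is kept unchanged as the label in every language.
    """
    too_long = [lang for lang, text in descriptions.items()
                if len(text) > MAX_DESCRIPTION_LENGTH]
    if too_long:
        raise ValueError(f"descriptions too long for: {too_long}")
    labels = {lang: {"language": lang, "value": label} for lang in descriptions}
    terms = {lang: {"language": lang, "value": text} for lang, text in descriptions.items()}
    missing = [lang for lang in TARGET_LANGUAGES if lang not in descriptions]
    return {"labels": labels, "descriptions": terms}, missing


payload, missing = build_terms("Example Corp", {
    "en": "project management software company",
    "es": "empresa de software de gestión de proyectos",
    "de": "Softwareunternehmen für Projektmanagement",
})
print(sorted(payload["descriptions"]), missing)  # ['de', 'en', 'es'] ['fr', 'zh', 'ja', 'pt']
```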

The brand entity and Wikipedia strategy overlap is covered in the Wikipedia entity strategy and brand mentions guide and the brand entity optimization playbook. Wikidata and Wikipedia are separate but complementary: a Wikipedia article is linked to its Wikidata item through sitelinks, and infoboxes can pull data from Wikidata, but Wikidata entries can exist without any Wikipedia article.

Advanced: using SPARQL to find optimization gaps

The Wikidata Query Service (query.wikidata.org) lets you run SPARQL queries to analyze your competitive set. A practical approach:

- Find all Wikidata entries for companies in your industry (e.g., industry (P452) = software industry, country (P17) = United States)

- Compare which properties your competitors have filled that you haven’t

- Prioritize filling those gaps, especially any properties that appear on 80%+ of competitor entries
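The three steps above can be sketched as one SPARQL query plus a little post-processing. The query lists every property used on companies with a given industry (P452) and country (P17), and the Python side computes which properties appear on at least 80% of competitor entries but not on yours. `industry_qid` is whatever item your competitors actually use in P452 (look it up rather than trusting a hard-coded value), the User-Agent is a placeholder, and broad industries may hit the query service's timeout.

```python
import json
from collections import Counter
from urllib.parse import urlencode
from urllib.request import Request, urlopen

ENDPOINT = "https://query.wikidata.org/sparql"


def build_query(industry_qid, country_qid="Q30"):  # Q30 = United States of America
    """Every (company, property) pair for companies in one industry and country."""
    return f"""
SELECT DISTINCT ?company ?prop WHERE {{
  ?company wdt:P452 wd:{industry_qid} ;
           wdt:P17 wd:{country_qid} .
  ?company ?p ?statement .
  ?prop wikibase:claim ?p .
}}
"""


def run_query(query):
    """Send the query to the Wikidata Query Service (network call)."""
    url = ENDPOINT + "?" + urlencode({"query": query, "format": "json"})
    request = Request(url, headers={
        "Accept": "application/sparql-results+json",
        "User-Agent": "entity-gap-sketch/0.1 (you@example.com)",  # placeholder contact
    })
    with urlopen(request, timeout=60) as response:
        return json.load(response)


def properties_by_company(results):
    """Group SPARQL JSON bindings into {company Q-code: {P-codes}}."""
    grouped = {}
    for row in results["results"]["bindings"]:
        company = row["company"]["value"].rsplit("/", 1)[-1]
        prop = row["prop"]["value"].rsplit("/", 1)[-1]
        grouped.setdefault(company, set()).add(prop)
    return grouped


def property_gaps(props_by_company, own_props, own_qid=None, threshold=0.8):
    """Properties on at least `threshold` of competitor entries that you lack."""
    competitors = [props for qid, props in props_by_company.items() if qid != own_qid]
    if not competitors:
        return []
    counts = Counter(pid for props in competitors for pid in props)
    share = {pid: n / len(competitors) for pid, n in counts.items()}
    gaps = [(pid, s) for pid, s in share.items() if s >= threshold and pid not in own_props]
    return sorted(gaps, key=lambda gap: (-gap[1], gap[0]))
```

Typical use is `property_gaps(properties_by_company(run_query(build_query(industry_qid))), own_props, own_qid)`, where `own_props` can come from your own item's claims. Passing your own Q-code keeps your entry out of the competitor baseline.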

This gap-filling approach is the Wikidata equivalent of competitor content gap analysis in traditional SEO.

WikiConsult’s 2025 guide captures the strategic framing: “Wikidata data is structured to be understood by search engines. It’s the difference between ‘this website mentions X’ and ‘this is definitively X’s official site.’”

Frequently Asked Questions

Can I get a Google Knowledge Panel without a Wikipedia article?

How do I claim my Google Knowledge Panel after creating a Wikidata entry?

Can competitors vandalize my Wikidata entry?

Does Wikidata affect ChatGPT's knowledge about my brand?
